- Apr 7

81% of Physicians Are Already Using AI. So Why Is Healthcare Still Nowhere Near Scale?

Healthcare AI just crossed an important line.

Not the line most people think.

Yes, the American Medical Association’s 2026 physician survey shows that 81% of physicians now report awareness or use of AI in practice. Yes, the average number of AI use cases per physician has more than doubled, rising from 1.1 in 2023 to 2.3 in 2026. On the surface, that looks like a market moving cleanly into scale.

But that is not what the data actually says.

The real story is more important, and more revealing.

Healthcare has not solved the AI adoption problem. It has only solved the AI usefulness problem.

That distinction matters.

Because once a technology proves useful, the next barrier is not whether people like it. The next barrier is whether institutions can trust it enough to operationalize it, govern it, and live with the consequences when something goes wrong.

That is exactly where healthcare is now.

AI is getting into the workflow. It is not yet earning the operating model.

Look at where physicians are actually using AI.

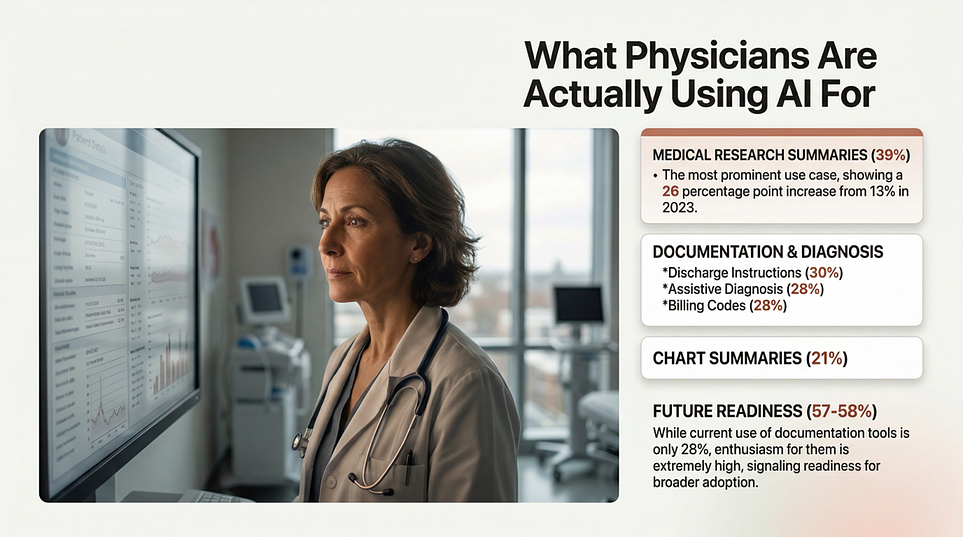

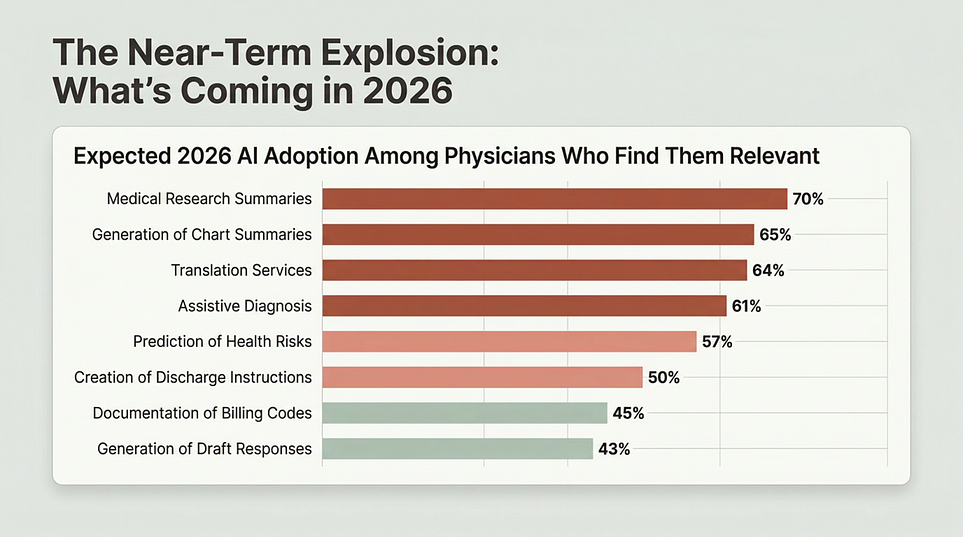

The leading use cases are summaries of medical research and standards of care (39%), creation of discharge instructions, care plans, and progress notes (30%), documentation of billing codes, medical charts, or visit notes (28%), and generation of chart summaries (28%). These are not fringe experiments. These are real workflow jobs inside the daily machinery of care delivery.

That pattern tells us something critical.

The first wave of healthcare AI is not about handing over clinical authority. It is about relieving workflow pressure.

AI is being adopted fastest where clinicians are drowning in documentation, inbox burden, information overload, and repetitive administrative drag. The technology is getting traction where it reduces friction, not where it asks physicians to surrender judgment.

That is a much more grounded story than the hype cycle suggests.

The market is not voting for “AI replaces clinicians.”

The market is voting for “AI reduces the tax of being a clinician.”

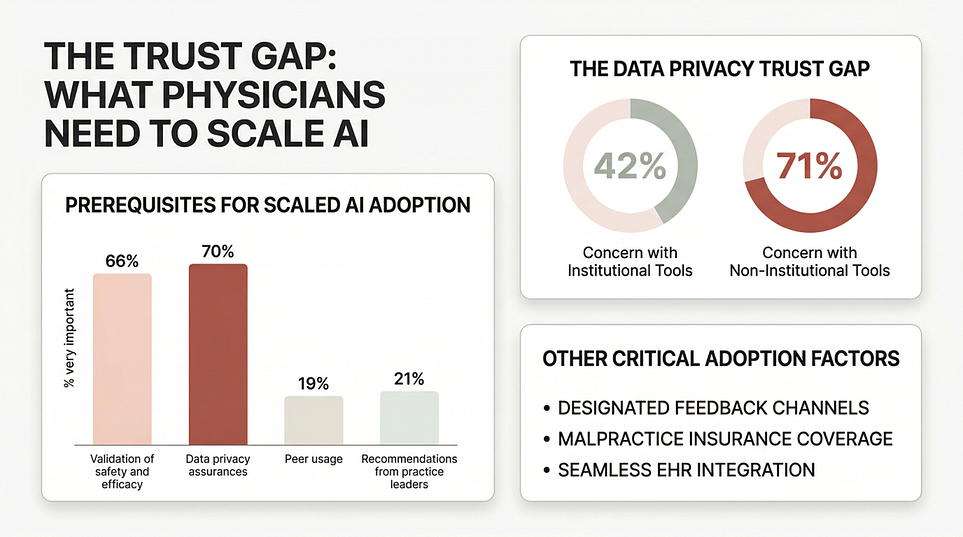

Physicians are not resisting AI. They are setting conditions for trust.

This is where the survey gets interesting.

Physician sentiment toward AI is increasingly positive. 76% say AI provides an advantage in their ability to care for patients, up from 65% in 2023. That is not skepticism. That is a meaningful shift in confidence.

But that confidence has guardrails.

A full 40% of physicians say they are equally excited and concerned about AI’s impact on practice. And among all the dimensions tested, patient privacy is the only one where more physicians expect harm than help.

That combination is the real headline.

Physicians are not saying no to AI. They are saying yes, but only if the surrounding system is trustworthy.

This is the point too many healthcare AI strategies still miss. They focus on model performance, feature lists, or demo quality. Physicians are signaling something else entirely. They want proof that the system will behave safely, preserve confidentiality, fit the workflow, and remain governable after deployment.

That is not a product problem alone.

That is an engineering discipline problem.

The next bottleneck is not intelligence. It is trust architecture.

When the survey asks what would facilitate adoption, the top answers are strikingly practical: validated safety and efficacy, data privacy assurances, a way to provide feedback when issues arise, coverage under standard malpractice insurance, and seamless EHR integration.

That list should reset the boardroom conversation.

Healthcare AI will not scale on usefulness alone. It will scale when trust becomes operational.

And the privacy numbers make that even clearer. Physicians report much higher privacy concern for non-institutional AI tools (71%) than for institutional tools (42%).

That is not a small detail. It is a blueprint.

It means adoption changes materially when AI moves from “a clever external tool” to “a governed institutional capability.” The trust boundary matters. Sponsorship matters. Control matters. Integration matters.

In other words, healthcare does not just need AI tools.

It needs AI systems wrapped in real trust architecture.

Identity. Access control. Auditability. Evidence trails. Monitoring. Escalation paths. Clear boundaries on where AI can support, draft, recommend, automate, or never act.

That is the difference between a pilot and a production system.
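One way to make those boundaries concrete is to encode them as policy that is checked and logged on every AI action. What follows is a minimal sketch under stated assumptions, not a reference implementation: the task names, the ordering of the authority levels, and the `Gate` class are all hypothetical, and a real ceiling for each task would be set by clinical governance rather than hard-coded.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from enum import IntEnum


class Authority(IntEnum):
    # Assumed ordering, from least to most autonomy.
    NEVER_ACT = 0
    SUPPORT = 1
    DRAFT = 2
    RECOMMEND = 3
    AUTOMATE = 4


# Hypothetical ceilings per task; illustrative values only.
POLICY = {
    "research_summary": Authority.SUPPORT,
    "visit_note": Authority.DRAFT,
    "billing_code": Authority.RECOMMEND,
    "pathology_interpretation": Authority.NEVER_ACT,
}


@dataclass
class Gate:
    policy: dict
    audit_log: list = field(default_factory=list)

    def check(self, user_id: str, task: str, requested: Authority) -> bool:
        # Tasks missing from the policy are denied by default.
        ceiling = self.policy.get(task, Authority.NEVER_ACT)
        allowed = Authority.NEVER_ACT < requested <= ceiling
        # Every decision, allowed or not, leaves an evidence trail.
        self.audit_log.append({
            "at": datetime.now(timezone.utc).isoformat(),
            "user": user_id,
            "task": task,
            "requested": requested.name,
            "ceiling": ceiling.name,
            "allowed": allowed,
        })
        return allowed


gate = Gate(POLICY)
gate.check("dr_lee", "visit_note", Authority.DRAFT)     # at the ceiling
gate.check("dr_lee", "visit_note", Authority.AUTOMATE)  # above the ceiling
```

The design choice worth noting is deny-by-default: anything the institution has not explicitly scoped is treated as "never act," and refusals are logged just like approvals.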

Liability is becoming the hidden variable behind adoption

If there is one finding executives should not ignore, it is this: clear liability frameworks rank as the most important regulatory action for increasing trust in and adoption of AI tools. Post-market surveillance, adverse event reporting, and ongoing oversight rank close behind.

This is a profound signal.

Healthcare is not merely asking whether AI works.

Healthcare is asking who is accountable when it fails.

Who owns the error?

Who detects degradation?

Who monitors performance after go-live?

Who intervenes when behavior drifts?

Who carries responsibility when privacy, safety, or efficacy are compromised?

These are not legal afterthoughts. They are adoption enablers.

The industry has spent years talking about AI governance as if governance were mostly about policies, committees, and review gates. But the survey points to something more mature.

Healthcare wants governance that continues after deployment, not just before it. It wants accountability in operation, not just approval on paper.

That is the real dividing line between model governance and production governance.
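To illustrate what governance "in operation" could look like, here is a small sketch of a post-deployment feedback loop: clinicians flag problematic outputs, and a rolling flag rate above a threshold escalates to a named owner. The window size, the threshold, and the `clinical-ai-oncall` owner are assumptions chosen for illustration, not figures from the survey.

```python
from collections import deque


class FeedbackMonitor:
    """Tracks clinician flags over a rolling window of recent AI outputs."""

    def __init__(self, window=50, max_flag_rate=0.10, owner="clinical-ai-oncall"):
        self.events = deque(maxlen=window)  # oldest events fall off automatically
        self.max_flag_rate = max_flag_rate
        self.owner = owner                  # hypothetical accountable team

    def record(self, flagged: bool) -> str:
        self.events.append(flagged)
        return self.status()

    def status(self) -> str:
        # Hold judgment until the window is full.
        if len(self.events) < self.events.maxlen:
            return "warming_up"
        rate = sum(self.events) / len(self.events)
        if rate > self.max_flag_rate:
            return f"escalate:{self.owner}"
        return "ok"


monitor = FeedbackMonitor(window=10, max_flag_rate=0.2)
for flagged in [False] * 10 + [True] * 3:
    state = monitor.record(flagged)
```

Even a loop this simple answers two of the questions above in code: who detects degradation (the monitor) and who is told to intervene (the owner).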

Burnout is the opportunity. Deskilling is the fear.

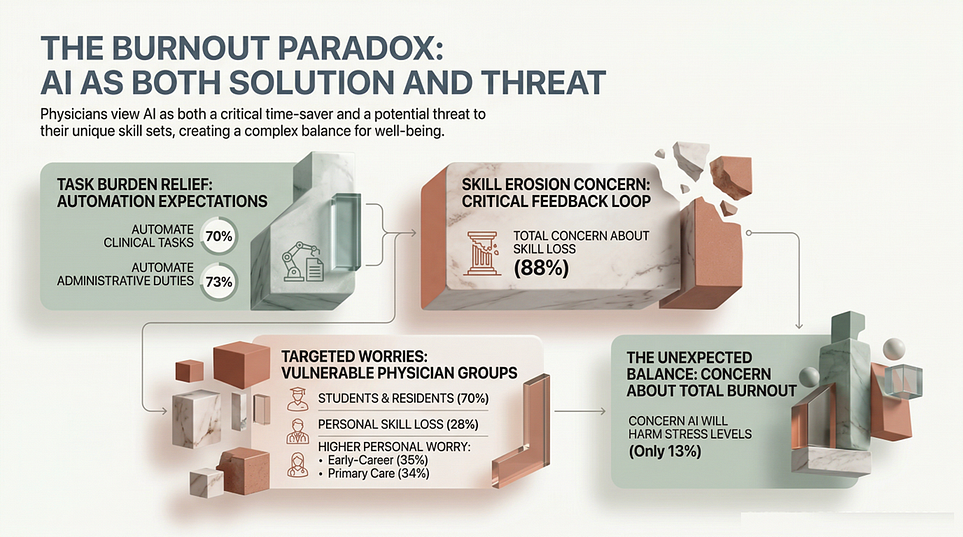

The survey captures another tension that will define the next phase of adoption.

On one side, the value case is compelling. 70% of physicians believe AI can help automate clinical tasks contributing to burnout, and 73% believe it can reduce administrative workload.

On the other side, the profession is uneasy about what that delegation does over time. 88% say they are very, somewhat, or mildly concerned about skill loss, especially among trainees and early-career physicians.

This is the trap.

If healthcare uses AI to remove burden but gradually erodes clinical judgment, interpretive skill, or training quality, then the short-term efficiency win becomes a long-term capability loss.

The winners in healthcare AI will be the organizations that solve both sides of the equation at once: reducing burden without eroding professional capability.

That requires far more than plugging in a tool. It requires intentional design of authority, oversight, escalation, review, and learning loops.

Patients are already bringing AI into the exam room

One of the most underrated findings in the report has nothing to do with clinicians using AI. It has to do with patients.

Only 8% of physicians say most of their patients disclose AI use, but 30% believe most patients are probably using AI. Physicians are broadly comfortable with patients using AI for general health questions and medication questions, but far less comfortable with AI for radiology or pathology interpretation.

This means patient AI is already part of the care environment, often invisibly.

So healthcare leaders now face a broader challenge than internal tool adoption. They have to decide how the institution will respond when patients arrive with AI-generated interpretations, recommendations, and misunderstandings already shaping the clinical encounter.

That is not just a digital front-door issue.

It is now part of the care operating model.

The real lesson

The AMA survey does not show that healthcare AI has reached maturity.

It shows that healthcare AI has entered its most consequential phase.

Usefulness is now proven.

Interest is now proven.

Demand is now proven.

What is not yet proven is whether healthcare organizations can engineer the operating conditions required for trustworthy scale.

That means:

- building privacy, safety, and evidence into the architecture
- defining authority boundaries clearly
- monitoring performance in production
- creating liability clarity and feedback loops
- embedding training into workflows
- keeping physicians directly involved in adoption decisions

The survey strongly supports that last point. 85% of physicians want to be consulted on or responsible for AI adoption in their practice, and 92% want more education and training on AI.

That is the message leaders should hear.

Healthcare is not asking for more AI hype.

It is asking for a production-grade discipline for designing, governing, and operating AI in environments where trust, safety, and accountability are non-negotiable.

That is why the next chapter of healthcare AI will not be won by whoever ships the flashiest model.

It will be won by whoever builds the most trustworthy system around it.

What Healthcare Needs Now Is Not More AI Hype. It Needs an Engineering Discipline

If you are a healthcare leader, platform provider, regulator, or enterprise team building AI for clinical environments, this is the point where the conversation has to change.

The challenge is no longer whether AI can be useful.

The challenge is whether AI can be deployed, governed, and scaled in environments where privacy, safety, accountability, clinical judgment, and institutional trust are non-negotiable.

That is not a feature rollout problem.

That is an engineering discipline problem.

It is a governance problem.

It is an operating model problem.

And increasingly, it is a runtime trust problem.

Healthcare will not scale AI through enthusiasm alone. It will scale AI when organizations adopt rigorous methods for designing systems with clear authority boundaries, evidence requirements, oversight mechanisms, monitoring loops, and accountable operating practices in production.

That is exactly why Agentic Engineering Institute (AEI) was created.

AEI exists to help organizations adopt the best practices of Agentic Engineering: the AI-native engineering discipline for building, governing, and operating production-grade agentic and augmented systems in the enterprise.

To help leaders get started, AEI offers free downloads of three foundational public frameworks:

Together, they provide a practical starting point for teams that need more than AI ambition. They need a structured way to move from experimentation to trustworthy scale.

If healthcare wants to accelerate value creation from AI without increasing operational fragility, governance gaps, or trust breakdowns, this is the work ahead.

Join AEI or partner with AEI to adopt the standards, frameworks, and best practices needed to engineer healthcare AI that is not only useful, but trustworthy, governable, and ready for production.

Because healthcare will not scale AI by accident.

It will scale AI by discipline.
