- Sep 26, 2025

MIT Says 95% of AI Pilots Fail. McKinsey Explains Why. Agentic Engineering Shows How to Fix It.

- AEI Digest

Why most AI pilots collapse in production — and how a new discipline, Agentic Engineering, closes the gap.

The AI copilot dazzled in the demo.

It summarized calls, drafted emails, even suggested pipeline moves.

Six months later, the glow was gone. Usage cratered. API bills soared. Customers stopped trusting it.

This isn’t an isolated story. MIT’s 2025 State of AI in Business report confirmed the pattern: 95% of GenAI pilots fail to scale.

The reasons are painfully consistent. McKinsey’s six key lessons point to the same gaps: agents that can’t be trusted, architectures that collapse under real use, governance that arrives too late, and economics that don’t hold.

The uncomfortable truth? Today’s AI doesn’t fail because the models are weak. It fails because the discipline is missing.

That’s why I wrote my new book:

📘 Agentic AI Engineering: The Definitive Field Guide to Building Production-Grade Cognitive Systems

Nearly 600 pages. 24 chapters. 19 practice areas.

It’s not another collection of prompts or tools. It’s the first discipline for building AI systems that survive contact with the real world.

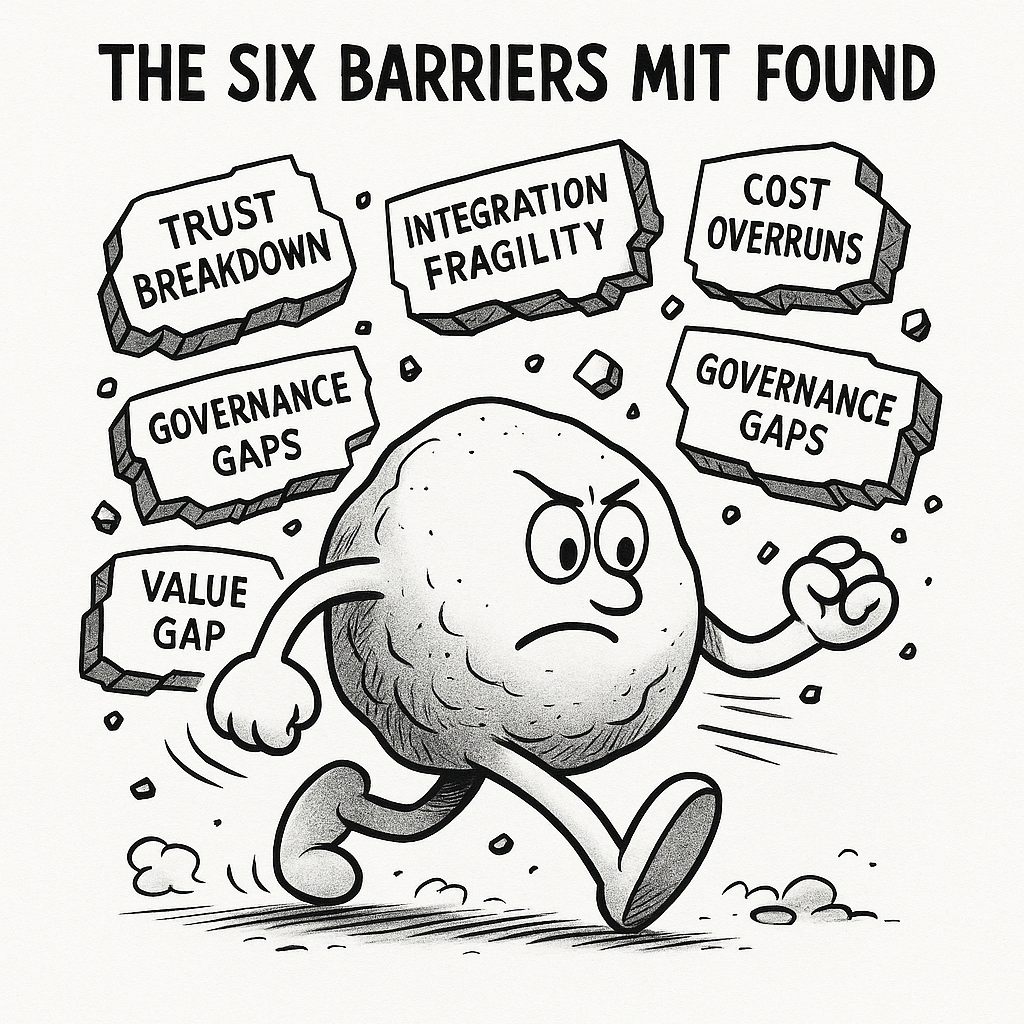

Why AI Pilots Fail: The Six Barriers MIT Found

MIT’s 2025 State of AI in Business didn’t just report the shocking 95% failure rate. It pinpointed why GenAI pilots collapse when they leave the demo stage.

Here are the six barriers that show up again and again in enterprises:

Trust Breakdown: Agents hallucinate, drift silently, or act like black boxes. Teams can’t see why decisions are made, and they can’t steer them.

Integration Fragility: Brittle APIs and vendor lock-in turn every system change into a hidden failure.

Cost Overruns: Runaway retries, wasted context windows, and ballooning API bills make pilots uneconomical.

Governance Gaps: Compliance, audit trails, and safety checks are bolted on after the fact, if at all.

Value Gap: Outputs may look impressive in a demo, but they don’t translate into business outcomes.

Operational Fragility: Agents fail quietly in production, with no alerts until trust (and customers) are already lost.

Each barrier is more than a bug; it’s a systemic fault line. Together, they explain why so many organizations are stuck in pilot purgatory.
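To make the Cost Overruns barrier concrete, here is a minimal sketch of one way to cap runaway retries: every attempt is charged against an explicit budget, and the call gives up loudly once the budget is spent. The names `CallBudget`, `call_with_budget`, and `BudgetExceeded`, the default limits, and the assumption that a call returns `(result, cost_usd)` are all hypothetical and chosen for illustration; they are not taken from the MIT report or the book.

```python
from dataclasses import dataclass


class BudgetExceeded(Exception):
    """Raised when the attempt or spend cap is hit before a call succeeds."""


@dataclass
class CallBudget:
    # Hypothetical limits, chosen only for illustration.
    max_attempts: int = 3
    max_cost_usd: float = 0.50
    attempts: int = 0
    spent_usd: float = 0.0

    def charge(self, cost_usd: float) -> None:
        """Record one attempt and its cost."""
        self.attempts += 1
        self.spent_usd += cost_usd

    def exhausted(self) -> bool:
        """True once either the attempt cap or the spend cap is reached."""
        return (self.attempts >= self.max_attempts
                or self.spent_usd >= self.max_cost_usd)


def call_with_budget(call, budget: CallBudget):
    """Retry `call` until it succeeds or the budget runs out.

    Assumption: `call` returns (result, cost_usd), with result None on failure.
    """
    while not budget.exhausted():
        result, cost_usd = call()
        budget.charge(cost_usd)
        if result is not None:
            return result
    raise BudgetExceeded(
        f"gave up after {budget.attempts} attempts, "
        f"${budget.spent_usd:.2f} spent"
    )
```

The design choice worth noticing is that failure is explicit: when the budget runs out, the caller gets an exception it can route to a fallback or a human, rather than a bill that grows quietly.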

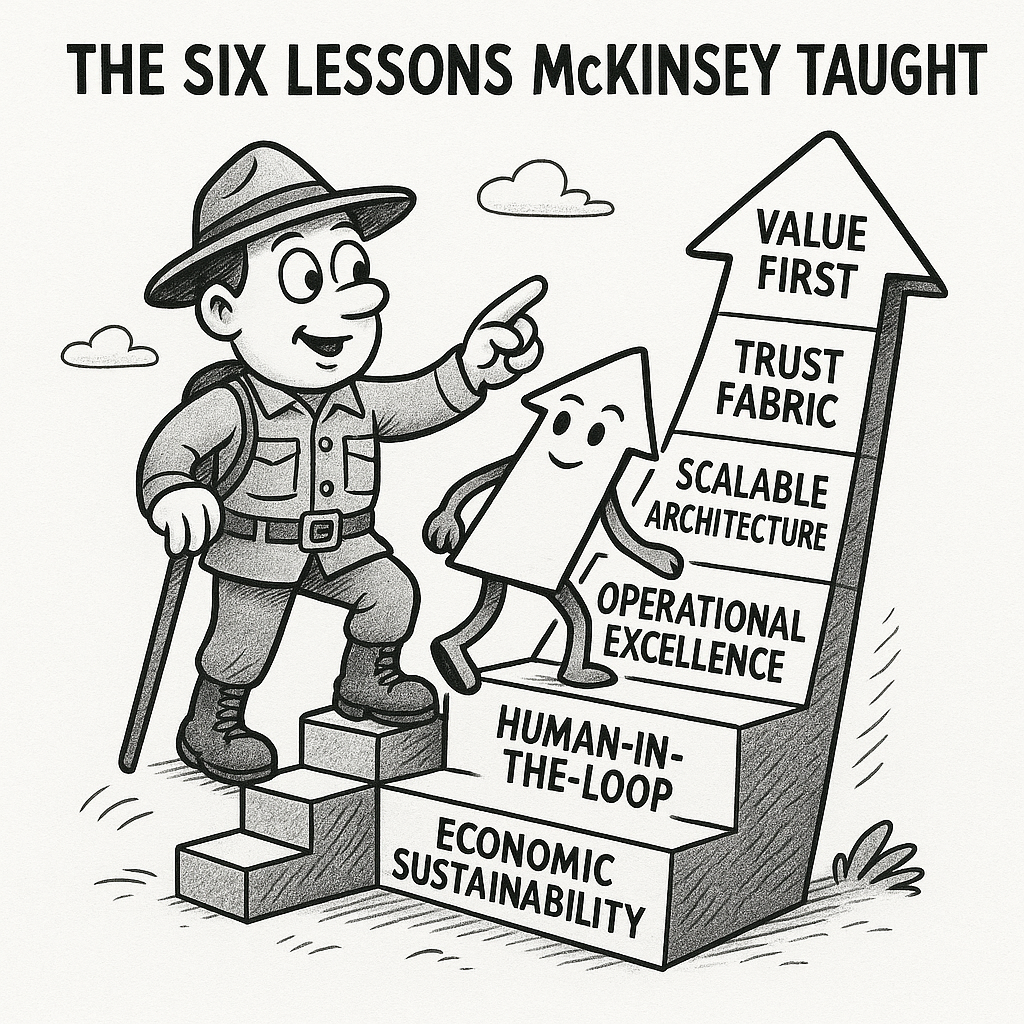

How to Survive AI Failure: The Six Lessons McKinsey Taught

If MIT showed why most AI pilots collapse, McKinsey’s six key lessons revealed what it takes to survive. These aren’t abstract ideas; they’re survival rules for any enterprise trying to move from fragile demos to production-grade systems.

Value First: Prove outcomes, not just outputs: time saved, errors avoided, workflows scaled.

Build a Trust Fabric: Governance, transparency, and auditability aren’t backend chores; they must be visible product features.

Engineer Scalable Architecture: Modularity, swap-ability, and resilience prevent agents from collapsing when tools or APIs shift.

Operational Excellence: Observability, monitoring, and continuous QA turn silent failures into recoverable incidents.

Human-in-the-Loop Oversight: Steerability is non-negotiable. Agents must show their reasoning so people can intervene.

Economic Sustainability: ROI must be provable. Without cost discipline, pilots burn capital faster than they create value.

McKinsey’s point is clear: AI doesn’t scale on speed alone. It scales on systems that deliver value, earn trust, and sustain economics.
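The "swap-ability" in the scalable-architecture lesson can be pictured with a small sketch: agent code depends on a narrow interface rather than on one vendor, so a provider can be replaced or backed up without touching callers. Everything here (`TextModel`, `PrimaryModel`, `BackupModel`, `FallbackModel`) is a hypothetical stand-in for illustration, not a real vendor SDK and not McKinsey's or the book's design.

```python
from typing import Protocol, Sequence


class TextModel(Protocol):
    """The only surface the agent code is allowed to depend on."""

    def complete(self, prompt: str) -> str: ...


class PrimaryModel:
    """Stand-in for a vendor client that is currently failing."""

    def complete(self, prompt: str) -> str:
        raise ConnectionError("primary provider unavailable")


class BackupModel:
    """Stand-in for a second provider behind the same interface."""

    def complete(self, prompt: str) -> str:
        return f"[backup] {prompt}"


class FallbackModel:
    """Tries each provider in order and returns the first success."""

    def __init__(self, models: Sequence[TextModel]):
        self.models = list(models)

    def complete(self, prompt: str) -> str:
        errors = []
        for model in self.models:
            try:
                return model.complete(prompt)
            except Exception as exc:  # keep the error for the final report
                errors.append(exc)
        raise RuntimeError(f"all {len(errors)} providers failed: {errors}")


model = FallbackModel([PrimaryModel(), BackupModel()])
print(model.complete("summarize the call"))
```

Because `FallbackModel` itself satisfies `TextModel`, the rest of the system cannot tell whether it is talking to one provider or several.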

The Missing Discipline: Why Agentic Engineering Is the Fix

MIT named the failures.

McKinsey named the lessons.

But naming isn’t enough.

What enterprises lack is a discipline — a structured way to design, govern, and operate AI systems so they survive contact with the real world.

That discipline is Agentic Engineering.

It is not about clever prompts or one-off copilots. It is the systematic practice of engineering cognition in motion — turning fragile demos into production-grade, enterprise-grade, and regulatory-grade systems.

Across 24 chapters and 19 practice areas, my new book delivers a full blueprint, complete with the Agentic Stack, maturity ladders, design patterns and anti-patterns, field lessons, best practices, case studies, and code examples. Every chapter is designed to seal the fault lines MIT exposed while embedding the survival principles McKinsey taught.

Agentic Engineering is not another toolchain. It is the operating manual for the AI era.

Closing the Gap: How Agentic Engineering Maps to MIT and McKinsey

If MIT exposed the cracks and McKinsey offered the survival rules, then Agentic Engineering is the discipline that stitches them together.

Each of the book’s 24 chapters and 19 practice areas directly addresses the barriers MIT identified, while embedding the six principles McKinsey outlined. This is where diagnosis becomes cure.

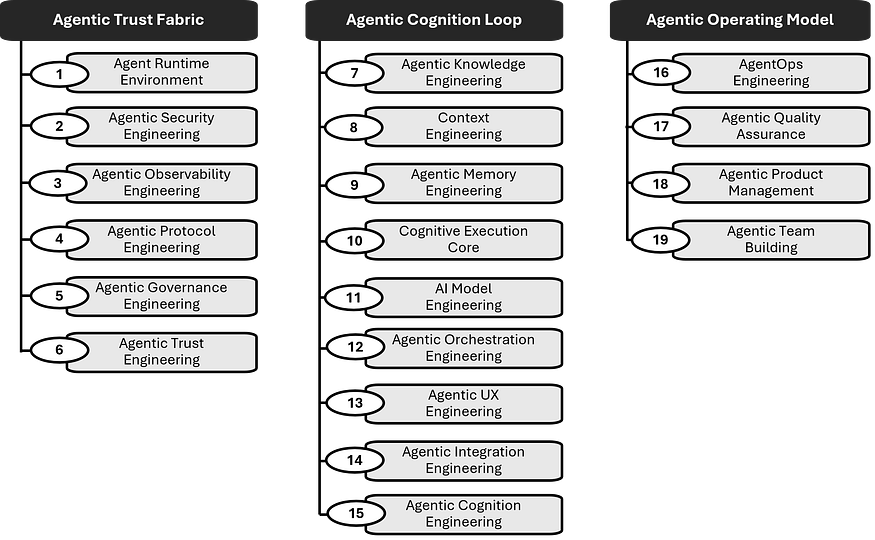

Agentic Engineering consists of 19 engineering practice areas.

Early Chapters: Naming the Fault Lines

The opening chapters (1–4) confront the uncomfortable truth: agents don’t fail because the models are dumb; they fail because the architecture is brittle. These chapters surface the 10 fault lines MIT hinted at, including context collapse, leaky memory, and silent drift, and introduce the Agentic Stack and maturity ladders as systematic ways to close them. In McKinsey’s terms, this is where value focus and scalable architecture begin.

Runtime Foundation: Engineering the Frame

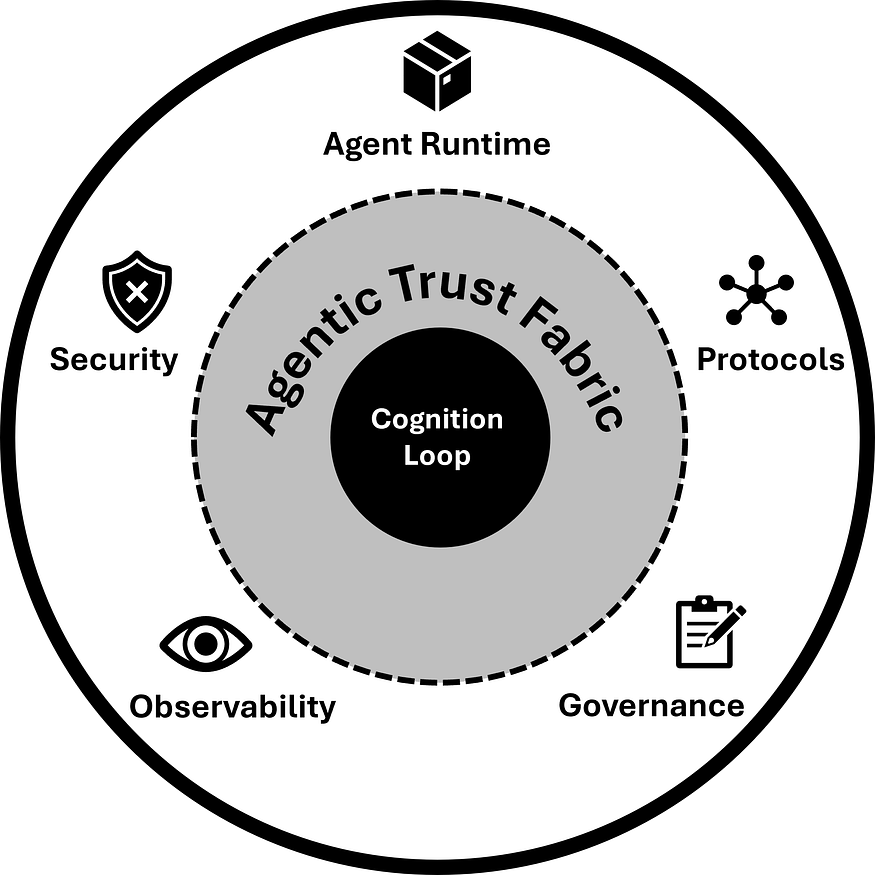

Chapters 5–10 lay down the guardrails — Agent Runtime Environment (ARE), security, observability, governance, protocols, and trust. These disciplines tackle MIT’s integration fragility, governance gaps, and operational fragility head-on. They also bring to life McKinsey’s trust fabric and operational excellence, making oversight and auditability first-class citizens of the system instead of afterthoughts.

The Agentic Runtime Foundation in Agentic Engineering

Cognition Loop: Sealing the Leaks

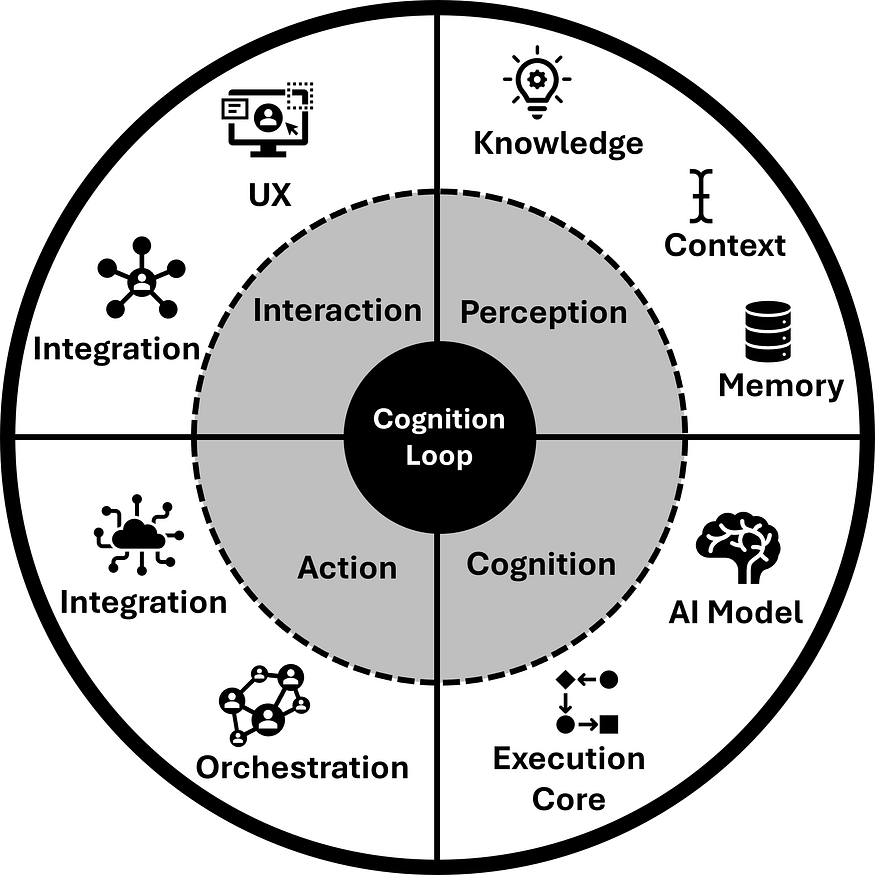

Chapters 11–19 move into the heart of cognition: knowledge, context, memory, models, orchestration, UX, and integration. Each area fixes a specific MIT fault line. Context and memory chapters, for instance, prevent drift and scope creep that lead to runaway costs. Orchestration and integration chapters address the vendor lock-in and brittle API problem that MIT highlighted. And across all of them, McKinsey’s calls for economic sustainability and human-in-the-loop oversight are embedded as design principles, not patches.

The Agentic Cognition Loop in Agentic Engineering
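As a rough illustration of how bounding context keeps costs from running away, the sketch below keeps the system prompt, then adds the most recent messages until a token budget is used up. The function names are hypothetical, and `estimate_tokens` is a deliberately crude word count standing in for a real tokenizer; treat it as an assumption, not as how any particular model counts tokens.

```python
def estimate_tokens(text: str) -> int:
    """Crude stand-in for a tokenizer: counts whitespace-separated words."""
    return len(text.split())


def fit_to_budget(system_prompt: str, history: list[str],
                  max_tokens: int) -> list[str]:
    """Keep the system prompt plus the newest messages that fit the budget."""
    remaining = max_tokens - estimate_tokens(system_prompt)
    if remaining < 0:
        raise ValueError("system prompt alone exceeds the context budget")

    kept = []
    # Walk backwards so the most recent messages are kept first.
    for message in reversed(history):
        cost = estimate_tokens(message)
        if cost > remaining:
            break  # stop at the first message that no longer fits
        kept.append(message)
        remaining -= cost

    kept.reverse()  # restore chronological order
    return [system_prompt] + kept


context = fit_to_budget(
    "be brief",
    ["one two three", "four five", "six"],
    max_tokens=5,
)
print(context)  # ['be brief', 'four five', 'six']
```

Dropping the oldest messages is only one possible policy; summarizing them or moving them to longer-term memory are alternatives, each with different cost and drift trade-offs.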

Practice in Motion: Operating Autonomy

The final practice chapters (20–23) turn theory into operations. AgentOps addresses MIT’s “silent failures” by making reasoning, retries, and recoveries observable in real time. Agentic QA shifts quality from “does it run” to “can it be trusted,” answering McKinsey’s call for a trust fabric. Agentic Product Management reframes roadmaps around value, moats, edge, and trust instead of features — a direct response to MIT’s value gap and McKinsey’s ROI discipline. And Agentic Teams shows how to organize humans — operators, engineers, strategists — to sustain autonomy as a practice.
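One small way to picture "making retries and recoveries observable" is a monitor that records every agent step as a structured event and flags when the recent error rate crosses a threshold. The `StepMonitor` class, its window size, and its threshold are hypothetical choices for this sketch; a production setup would more likely send these events to an existing logging or metrics system.

```python
import json
from collections import deque


class StepMonitor:
    """Records agent step outcomes and flags a rising error rate."""

    def __init__(self, window: int = 20, max_error_rate: float = 0.3):
        self.outcomes = deque(maxlen=window)  # only the most recent results
        self.max_error_rate = max_error_rate
        self.events: list[str] = []           # structured, append-only trail

    def record(self, step: str, ok: bool, detail: str = "") -> None:
        """Store one step outcome as a JSON event."""
        self.outcomes.append(ok)
        self.events.append(
            json.dumps({"step": step, "ok": ok, "detail": detail})
        )

    def error_rate(self) -> float:
        """Share of failed steps within the recent window."""
        if not self.outcomes:
            return 0.0
        return self.outcomes.count(False) / len(self.outcomes)

    def should_alert(self) -> bool:
        """True when recent failures exceed the configured threshold."""
        return self.error_rate() > self.max_error_rate


monitor = StepMonitor()
for ok in (True, True, True, False, False):
    monitor.record("draft_email", ok, detail="" if ok else "tool timeout")
print(monitor.error_rate(), monitor.should_alert())
```

The point of the rolling window is that a burst of recent failures triggers an alert even if the long-run average still looks healthy, which is how a silent failure becomes a recoverable incident.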

Looking Ahead: Beyond the Enterprise

Chapter 24 lifts the lens to the future. MIT’s study stopped at enterprise-scale cracks. But the next wave — ecosystemic and operating-systemic intelligence — raises new questions: how do we connect agents across companies, industries, and societies? This is where Agentic Engineering prepares the ground, ensuring the same principles of trust, governance, and sustainability scale beyond single organizations.

Taken together, the chapters are not a grab bag of practices. They form a new discipline that closes every failure mode MIT named while embedding every survival principle McKinsey prescribed.

Why This Matters to You

The gaps MIT exposed aren’t abstract. They show up in your daily work, whether you’re writing code, managing products, or leading strategy. Agentic Engineering matters because it turns those gaps into practices you can actually apply.

If you’re an AI engineer or software engineer

Stop firefighting brittle prompts and one-off scripts. Learn how to build agents with real memory boundaries, context budgets, and orchestration patterns that don’t collapse in production.

If you’re a product manager

Features won’t save you. Competitors can clone them in weeks. What lasts are moats: proprietary workflows, feedback loops, evaluation pipelines. Agentic Product Management (Chapter 22) shows you how to turn cognition into durable advantage.

If you’re an executive

You don’t need another demo. You need systems that pass audits, prove ROI, and scale without ballooning costs. The book equips you with maturity ladders, governance blueprints, and field-tested lessons to guide your enterprise from fragile pilots to production-grade autonomy.

This isn’t just about avoiding failure. It’s about shaping the next discipline of technology and positioning yourself at the forefront of it.

Where to Go Next

MIT has shown us why AI pilots fail.

McKinsey has shown us what to focus on.

Now the discipline of Agentic Engineering shows you how to fix it.

We are the founding generation. The discipline is being born. The only question left is whether you will help shape it — or be left behind.

📘 Agentic AI Engineering: The Definitive Field Guide to Building Production-Grade Cognitive Systems

You can download the eBook or get a print copy on Amazon.

Nearly 600 pages. 24 chapters. 19 practice areas. Packed with the Agentic Stack, maturity ladders, patterns and anti-patterns, field lessons, case studies, and code examples.

👉 For teams ready to scale their skills, join the Agentic Engineering Academy to future-proof your talent.

Takeaway:

95% of AI pilots fail because we lack a discipline.

Agentic Engineering is that discipline — transforming fragile demos into production-grade, enterprise-grade, and regulatory-grade systems.

If you found value in this article, I’d be grateful if you could show your support by liking it and sharing your thoughts in the comments. Highlights on your favorite parts would be incredibly appreciated! For more insights and updates, feel free to follow me on Medium and connect with me on LinkedIn. If your organization needs support on AI transformation, please contact me directly at yizhou@argolong.com.

References and Further Reading

Yi Zhou. “Agentic AI Engineering: The Definitive Field Guide to Building Production-Grade Cognitive Systems.” ArgoLong Publishing, September 2025.

McKinsey. “One year of agentic AI: Six lessons from the people doing the work.” September 2025.

MIT NANDA. “The GenAI Divide: State of AI in Business 2025.” July 2025.

Yi Zhou. “New Book: Agentic AI Engineering for Building Production-Grade AI Agents.” Medium, September 2025.

Yi Zhou. “Every Revolution Demands a Discipline. For AI, It’s Agentic Engineering.” Medium, September 2025.

Yi Zhou. “Software Engineering Isn’t Dead. It’s Evolving into Agentic Engineering.” Medium, September 2025.

Yi Zhou. “Agentic AI Engineering: The Blueprint for Production-Grade AI Agents.” Medium, July 2025.

MIT Says 95% of AI Pilots Fail. McKinsey Explains Why. Agentic Engineering Shows How to Fix It. was originally published in Agentic AI & GenAI Revolution on Medium.