- Apr 24

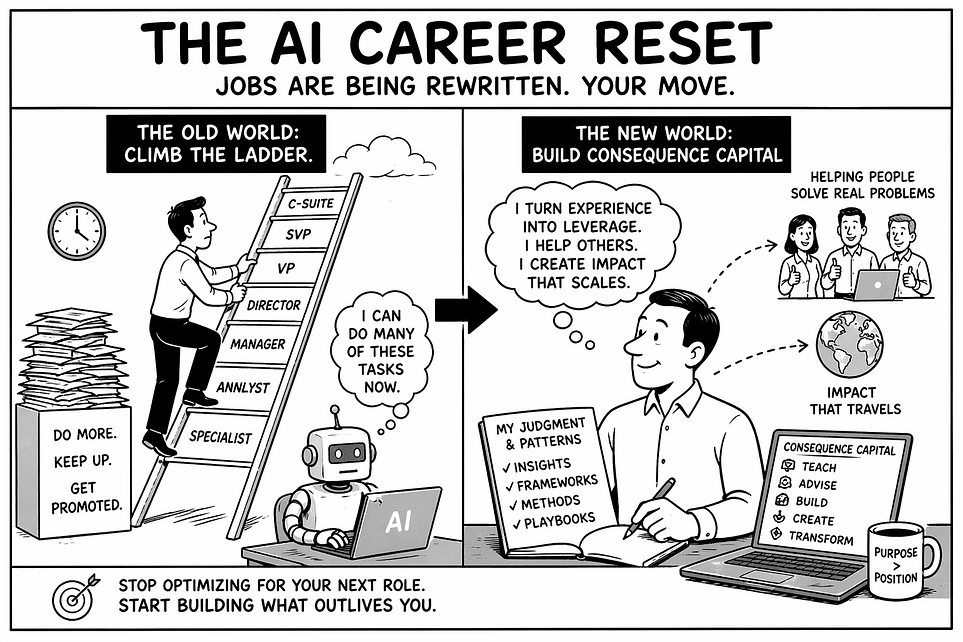

AI Disruption Is Rewriting Jobs and Careers. Winners Will Find the Hidden Leverage.

The Career Bargain Is Breaking

The career you spent years building may be less defensible than the work it taught you to do.

That is the uncomfortable truth AI is forcing into the open. The resume may still look impressive. The title may still signal progress. The experience may be real. By the old rules, this should be enough. For decades, the formula was clear: get a good job, work hard, become excellent, climb the ladder, earn bigger titles, and build a successful career. It was not perfect, but it gave ambition a structure and professionals a promise.

The promise was simple: if you became valuable to the system, the system would reward you.

AI is breaking that promise.

Not because every job will disappear. That is the shallow version of the story. The deeper disruption is more unsettling: AI is changing the market value of professional work itself. It is separating work that merely produces output from work that carries judgment. It is exposing which parts of a career were built on repeatable tasks, institutional position, and polished execution rather than on insight that is difficult to reproduce.

For years, professional outputs were treated as proof of capability: the memo, the market scan, the project update, the slide deck, the meeting summary, the first draft of code, the strategy note, the executive briefing. These artifacts mattered because they took time, training, context, and coordination to produce. They signaled competence. They made people visible inside organizations. They helped justify roles, promotions, budgets, and authority.

Now many of those same outputs can be generated in seconds.

That does not make human expertise irrelevant. It exposes generic expertise. The anxiety many professionals feel is not simply fear of automation. It is the recognition that some of the work that made them look valuable may soon become abundant, inexpensive, and expected by default.

This is why AI feels different from earlier waves of technology. It is not only improving productivity at the margins. It is moving directly into the visible layer of knowledge work, the layer where professionals have historically demonstrated value. When AI can produce a credible first draft, a coherent analysis, a polished slide, or a plausible recommendation, the market begins to ask a harder question: what is the human adding beyond the output?

That question changes the meaning of a career.

A title can be reorganized away. A job description can be decomposed. A function can be redesigned. A career built mainly around repeatable outputs can lose pricing power faster than the person holding that career expected. The old question was, “How do I climb higher?” The new question is more uncomfortable:

What have I learned from the climb that AI cannot easily reproduce?

That is the career reset.

AI is not asking whether you are experienced. It is asking whether your experience has become leverage.

AI Attacks the Generic First

AI does not attack all work equally. It attacks the generic first.

It moves fastest where work follows familiar patterns, uses standard language, depends on templates, and can be judged as “good enough” without deep context. Generic writing. Generic analysis. Generic reporting. Generic coding. Generic coordination. Generic consulting slides. Generic management language. Generic expertise that sounds polished but carries little rare judgment.

This is why the disruption feels so personal for knowledge workers. For years, many professionals built credibility by becoming excellent at the visible artifacts of work. They knew how to write the memo, structure the deck, summarize the meeting, prepare the update, synthesize the research, frame the recommendation, and communicate in language that sounded credible to executives and clients.

Those skills still matter. But they are no longer scarce in the same way.

AI has made the first draft abundant. It has made polished language cheap. It has made average analysis faster. It has made competent output easier to produce at scale. The market will still need these outputs, but it will become less willing to pay a premium for humans who only produce what AI can generate, revise, and improve quickly.

The uncomfortable part is that generic work often hides inside impressive careers.

A person may have a respected title, years of experience, and a strong reputation, yet much of their visible work may still be built around repeatable outputs. They attend meetings, turn ambiguity into slides, translate decisions into documents, coordinate across teams, write updates, produce recommendations, and keep the machinery moving. In the old system, that looked like high-value knowledge work. In the AI system, much of it becomes easier to reproduce.

That does not mean the person has no value. It means the deeper value may not be visible.

This is the real career risk. AI does not have to replace your whole job to weaken your market position. It only has to replace the part of your work the market can easily recognize, compare, and price. If the visible surface of your work looks generic, the market may begin to assume your value is generic too.

The professionals who struggle most may not be the least intelligent or least hardworking. Many will be excellent by yesterday’s standards. They will be reliable, articulate, experienced, and productive. But if their main contribution is a better version of what AI can already produce, they will be pulled into a new kind of competition, not against another professional with a stronger resume, but against abundance itself.

That is why “be excellent at your job” is no longer enough as a career strategy. Excellence still matters. But excellence in generic work becomes harder to defend when the baseline keeps rising and the cost of production keeps falling.

The better question is not, “Can AI do my job?”

That question is too broad to be useful.

The better question is:

Which parts of my work lose value when competent output becomes abundant?

Once you ask that, the career landscape changes. Some work gets compressed. Some work becomes automated. Some work becomes table stakes. But some work becomes more valuable because AI makes output cheaper while making real judgment more important.

The dividing line is not human versus machine.

The dividing line is generic output versus earned judgment.

The Hidden Leverage: Consequence Capital

If AI makes generic output abundant, the next career question is not simply how to work harder, learn faster, or use better tools.

The deeper question is more uncomfortable:

What part of my value is invisible until something important is at risk?

That is where durable professional advantage often lives. Not in the polished output. Not in the title. Not in the language of expertise. It lives in the judgment a person develops after reality has pushed back enough times to leave patterns.

I call this Consequence Capital.

Consequence Capital is the accumulated judgment a person earns by repeatedly working through real problems under real constraints, where decisions produce consequences, failures leave patterns, and experience becomes reusable insight.

It is not just knowledge. Knowledge can be searched, summarized, and generated.

It is not just experience. Experience can repeat for years without compounding.

It is not just seniority. Seniority gives authority, but authority is not the same as judgment.

Consequence Capital is what you know because you have seen what happens when ideas meet reality.

It is the judgment that forms after watching a technically elegant solution fail in production. It is the pattern recognition that comes from seeing the same organizational failure appear in different industries under different names. It is the instinct that tells you a strategy will not survive the budget cycle, a governance model will collapse at runtime, a product launch will miss adoption, or a transformation program is confusing activity with progress.

This kind of judgment is hard for AI to reproduce reliably, but not for mystical reasons. The reason is technical.

AI systems do not reason in a vacuum. They reason over available context: the prompt, retrieved documents, tool outputs, memory, system instructions, examples, traces, and feedback signals. When the right context is captured and engineered well, AI can be extremely powerful. It can help analyze tradeoffs, compare options, identify patterns, generate hypotheses, and accelerate expert work.

But much of what makes expert judgment valuable is not automatically available as context.

The failed attempt nobody documented is not in the retrieval index. The political constraint nobody writes down is not in the prompt. The customer trust issue hidden behind a clean metric is not visible to the model. The regulatory interpretation that only matters in practice may not be captured in a policy document. The budget pressure that changes the real decision may never appear in the project plan. The organizational scar tissue that explains why the “obvious” solution will not work is often distributed across people, meetings, history, incentives, and memory.

This is the context gap.

AI can summarize the best practice. Consequence Capital tells you when the best practice is likely to fail.

AI can generate the strategy memo. Consequence Capital tells you which part of the strategy the organization is not ready to execute.

AI can produce the project plan. Consequence Capital tells you where the plan will break when incentives, politics, technical debt, customer behavior, regulation, and human resistance collide.

The issue is not that AI cannot reason. It is that reasoning quality depends on context quality. If the relevant context is missing, stale, distorted, ungrounded, or disconnected from outcomes, the model may produce an answer that sounds intelligent while missing the constraint that determines the result.

That is why context engineering matters so much in the AI era. To reproduce expert judgment, an AI system needs more than a large model and a prompt. It needs access to the right operating context, reliable memory, provenance, domain-specific feedback, outcome traces, evaluation criteria, and mechanisms to distinguish what merely sounds plausible from what has survived reality.
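As a rough illustration of the list above, here is a minimal Python sketch that checks which of those ingredients are actually present before a system is asked to make a judgment call. The `ContextBundle` class, its field names, and the `context_gaps` helper are hypothetical names invented for this example; they are not part of any real library or of a specific AEI method, and a real system would need far richer checks than "is this list empty."

```python
from dataclasses import dataclass, field, fields


@dataclass
class ContextBundle:
    """Hypothetical container for what an AI system is given before it answers."""
    operating_context: list[str] = field(default_factory=list)
    memory: list[str] = field(default_factory=list)
    provenance: list[str] = field(default_factory=list)
    domain_feedback: list[str] = field(default_factory=list)
    outcome_traces: list[str] = field(default_factory=list)
    evaluation_criteria: list[str] = field(default_factory=list)


def context_gaps(bundle: ContextBundle) -> list[str]:
    """Return the names of the ingredients that are empty in this bundle."""
    return [f.name for f in fields(bundle) if not getattr(bundle, f.name)]


# Illustrative data only: a project plan and a deadline, but no record of
# what happened the last time something similar was tried.
bundle = ContextBundle(
    operating_context=["Q3 project plan"],
    evaluation_criteria=["launch by end of quarter"],
)
gaps = context_gaps(bundle)
print(gaps)  # ['memory', 'provenance', 'domain_feedback', 'outcome_traces']
```

The point of the sketch is the shape of the check, not the code itself: an answer produced with those four fields empty may read well and still miss the constraint that decides the outcome.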

Without those, AI can imitate the language of experience, but it cannot reliably manufacture consequence-tested judgment.

This is not a claim of permanent human superiority. It is a claim about system design. AI can amplify Consequence Capital when humans and organizations capture it, structure it, validate it, and connect it to feedback loops. But it does not automatically create that capital from generic data.

That distinction matters.

A professional’s advantage is not simply that they “know more.” AI will know more. The advantage is that they have earned judgment through consequences, and then learned how to make that judgment portable.

Capital becomes powerful when it can be invested and compounded. A repeated warning becomes a diagnostic. A recurring pattern becomes a framework. A hard-earned lesson becomes a playbook. A private method becomes a course, standard, tool, or community.

That is the hidden leverage.

The safest professionals in the AI era will not be the ones who merely accumulate experience. They will be the ones who turn consequence-tested judgment into assets that AI can help scale, but cannot automatically invent.

My Own Reset: From Career Ladder to Consequence Capital

I learned this before I had a name for it.

For much of my career, I thought I was climbing a ladder. I moved from research scientist to engineer, architect, director, CTO, CIO, consulting leader, founder, and eventually founder and CEO of the Agentic Engineering Institute (AEI).

On paper, it looked like progression: bigger roles, broader scope, more authority, and more rooms where important decisions were made.

But looking back, the real story was not promotion. It was accumulation.

Each role gave me a different lens.

Science taught me rigor.

Engineering taught me how ideas become systems.

Architecture taught me how systems break when data, workflows, security, users, and operations collide.

Executive leadership taught me how organizations actually make decisions through budgets, incentives, timing, risk, politics, and trust.

Consulting taught me to see patterns across companies that described their problems differently but often failed for the same structural reasons.

Over time, those experiences converged around one question:

Why do intelligent organizations struggle to turn AI ambition into reliable, governed, scalable value?

The answer did not come from theory. It came from seeing the same pain points again and again.

Leaders were under pressure to “do AI,” but lacked a clear operating model. Teams launched impressive pilots that never became production systems. Companies bought tools before building the capability to use them well. Governance looked complete in documents but failed to shape runtime behavior. Organizations confused experimentation with adoption, adoption with value, and value with scale.

Eventually, the pattern became impossible to ignore.

Enterprises were trying to build agentic systems with disciplines that were not designed for agentic behavior.

Traditional software engineering was built for systems whose behavior is mostly specified in advance. Machine learning practice was built around data, models, training, evaluation, and deployment. AI governance was largely built around policies, principles, model oversight, and risk reviews. All of that still matters. But none of it fully answers the new problem: systems that reason, use tools, adapt to context, interact with workflows, and operate under delegated authority at runtime.

That gap became the foundation of Agentic Engineering.

I did not create Agentic Engineering because the world needed another AI phrase. I created it because I kept meeting people who were struggling with the same AI transformation problems. They had ambition, budget, talent, and pressure, but they lacked a disciplined way to move from experimentation to operating value.

My calling became clear: help them succeed.

That meant taking the “secret sauces” I had learned through consulting work, enterprise deployments, architecture reviews, governance debates, and transformation programs, and turning them into something others could actually use. Not vague advice. Not another inspirational AI story. Practical methods. Standards. Frameworks. Training. Certification. Operating practices.

The question was no longer only, “Can the model answer?”

The harder question was:

Can AI systems reason, act, recover, comply, explain, and create value under real operating constraints?

That required a new engineering lens. Cognition had to be treated as a system property, not a demo feature. Context, memory, orchestration, observability, security, governance, and trust had to become first-class engineering concerns. AI adoption had to move beyond scattered pilots toward production-grade, enterprise-grade, and eventually regulatory-grade systems.

AI accelerated this transition for me.

Before this wave of AI, my experience could still live inside roles: executive, advisor, consultant, founder. I could help one organization, one leadership team, one architecture review, or one transformation program at a time. But as AI began compressing generic knowledge work, the limitation became obvious: expertise trapped in my calendar was not enough.

If the patterns were real, they needed to become methods. If the pain points were recurring, they needed to become practices. If the secret sauces were useful, they needed to be shared. If the judgment was valuable, it needed to travel without me being in every room.

That was the shift from career ladder to Consequence Capital.

For me, that became the Agentic Engineering Institute: the institutional home for a discipline built to help people and organizations struggling with AI transformation turn confusion into capability, pilots into production, and ambition into governed, scalable value.

At the beginning, I thought I was building a career.

Now I see I was collecting the judgment that had to become useful beyond me.

What People Should Do Now

The AI career reset is not a reason to panic. It is a reason to reposition.

The wrong response is to defend the old job description. The better response is to examine what the job has been teaching you. Every serious career contains two kinds of work: the visible outputs you produce and the deeper judgment you develop while producing them.

AI will compress many of the outputs.

The opportunity is to convert the judgment into leverage.

Start with a simple audit.

What do people repeatedly ask you to judge, not just produce?

What failures can you predict before others see them?

What do you understand because you have lived the consequences?

Where do you keep giving the same advice in different situations?

What feels obvious to you but confusing to others?

Those questions matter because they point to the part of your experience that may be more valuable than your current role. They reveal the patterns you have earned, not just the tasks you perform.

Then ask the harder question:

What part of my judgment is still trapped inside conversations, meetings, emails, and private advice?

That is where your leverage is leaking.

If you keep explaining the same pattern, write the framework.

If you keep warning people about the same failure, build the diagnostic.

If you keep helping teams avoid the same mistake, create the checklist.

If you keep teaching the same method, turn it into a playbook, course, standard, tool, or community.

The format is less important than the shift.

A repeated opinion can become a point of view. A point of view can become a framework. A framework can become a diagnostic. A diagnostic can become a method. A method can become something others can learn, apply, improve, and scale.

This does not mean everyone needs to become a founder, creator, author, or public personality. Productizing your judgment does not require turning your life into content. It means making your best thinking more portable, reusable, and useful to others.

Inside a company, that may become a better operating model, onboarding system, decision framework, quality checklist, governance process, or internal playbook.

Outside a company, it may become a niche advisory practice, training program, research agenda, software product, professional community, or new category of work.

The key is to stop treating experience as something that only lives on your resume.

Experience becomes Consequence Capital only when it compounds. It compounds when you name the pattern, clarify the method, test it with others, improve it through feedback, and make it useful beyond your own presence.

AI can help with that. It can help you draft, structure, pressure-test, refine, teach, and distribute your ideas. But AI cannot decide which consequences shaped you, which patterns you have earned, or which judgment deserves to become an asset. That part is still yours.

So here is the challenge:

In the next seven days, choose one judgment you keep using privately and turn it into something others can use.

Write the one-page framework. Build the checklist. Sketch the diagnostic. Record the short lesson. Publish the point of view. Create the operating guide. Teach the pattern to one team. Test whether it helps someone make a better decision or avoid a real mistake.

Do not wait until it is perfect. Perfect is not the point. Portable is the point.

The old career strategy was to become valuable enough to be promoted.

The new career strategy is to become useful enough to be portable.

Do not just ask, “What is my next role?”

Ask the more important question:

What have I learned through consequences that deserves to become useful beyond me?

That is the work now.

Find it. Name it. Package it. Test it. Improve it. Make it travel.

Learn More: Free AEI Webinar

To go deeper, join AEI’s free webinar:

The AI Career Reset: How to Win When Jobs Are Being Rewritten

May 28, 2026 | 11:00 AM PT / 2:00 PM ET

This enterprise workforce panel will cover how agentic AI is reshaping jobs, skills, workflows, and career paths, and what professionals and leaders must do to stay relevant in 2026.

Register at the AEI Events site.
