Apr 27

AI Will Replace Developers? The Real Story Is Bigger Than Coding

1. The Question Everyone Is Asking Is Too Small

The most dangerous myth in software engineering is not that AI agents will replace developers.

The more dangerous myth is that they will replace developers evenly.

They will not.

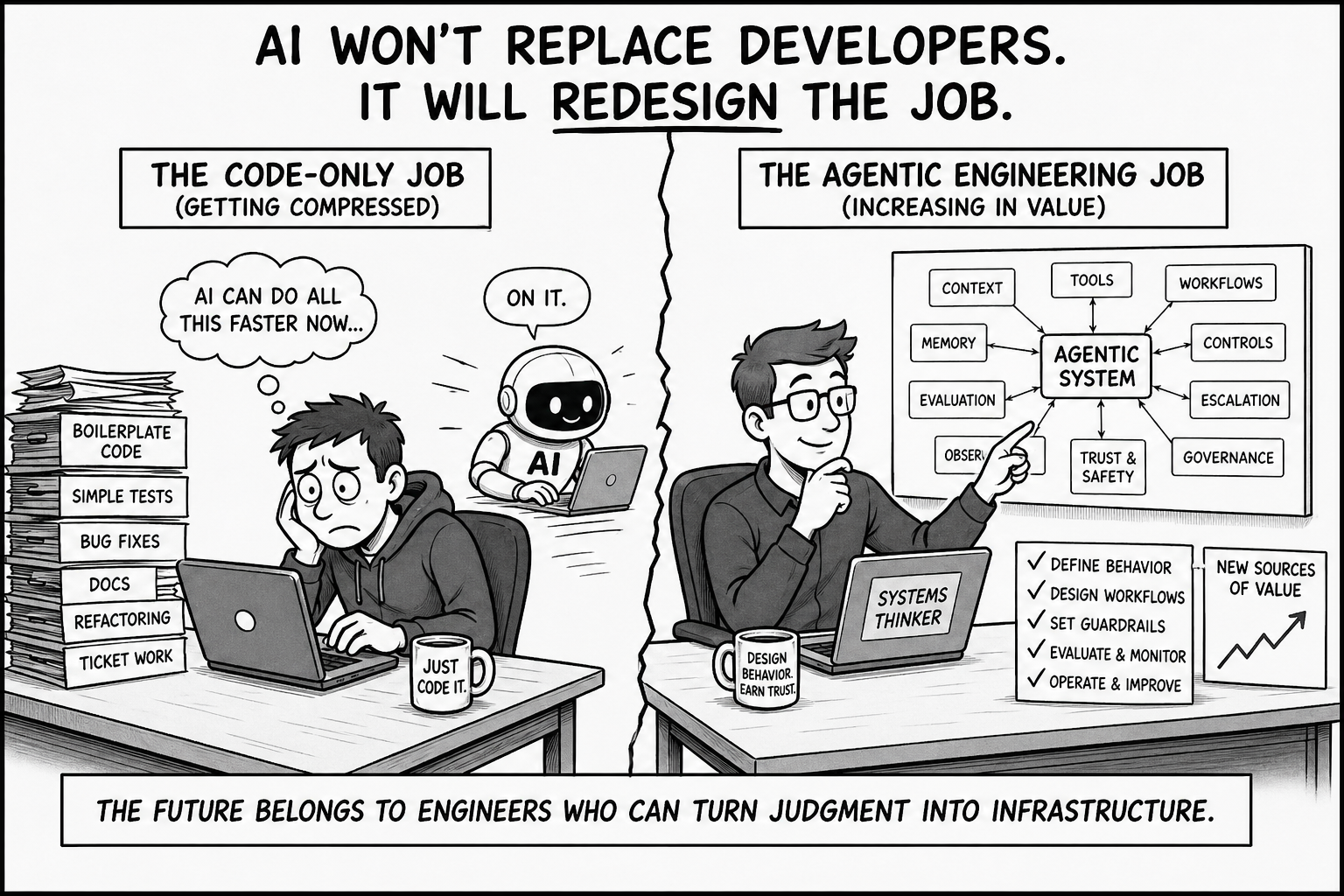

AI agents are already compressing the parts of the job that were easier to describe, decompose, generate, and verify than many people wanted to admit: boilerplate code, routine tests, simple bug fixes, basic documentation, mechanical refactoring, and ticket execution with clear acceptance criteria.

That work looked safe because it looked technical. But much of it was pattern recognition, translation, and repetition: take an instruction, map it to a familiar implementation, adapt the surrounding style, and produce something that can be checked.

That kind of work is already becoming cheaper.

So when people ask, “Will AI replace developers?” they are asking a question that is too small. The real question is sharper:

What happens to the coding job when code production becomes cheap?

That is the shift most people are missing.

AI agents are not just writing code faster. They are changing what the software system is. Modern agentic systems are not made only of code, tests, APIs, and deployments. They also depend on prompts, context, memory, tool permissions, workflows, controls, evaluations, escalation paths, audit trails, and governance routines.

In other words, software engineering is moving beyond the codebase.

That does not make engineering less important. It makes weak engineering more dangerous. When systems can interpret intent, call tools, retrieve context, make recommendations, and trigger actions, the hard problem is no longer only whether the code runs. The hard problem is whether the entire system behaves correctly when the environment is uncertain, the model is imperfect, and the cost of failure is real.

This is why the developer role is being redesigned around a bigger question:

how do we build systems that can act, adapt, fail, recover, explain, escalate, and remain under control?

The future is not about whether AI can write software.

It can.

The future is about who can engineer the systems that software is becoming.

2. AI Is Coming for Code-Only Work First

AI is coming first for code-only work, not because developers are replaceable, but because many developer jobs were designed around tasks that are now easier to automate.

That distinction matters.

A large part of everyday software work follows a familiar pattern: take a reasonably clear ticket, translate it into implementation, write predictable tests, update documentation, fix obvious issues, and move the work across the board. It is real work. It still requires skill. But it is also the kind of work AI agents are increasingly good at because the task is bounded, the patterns are familiar, the context is limited, and the output can be checked.

This is why the replacement debate is often misleading. AI is not coming first for the deepest engineering judgment. It is coming first for work that looks like engineering but is often closer to translation: from requirement to code, from bug report to likely fix, from existing style to similar output, from known pattern to repeatable implementation.

The difference is simple.

Code production turns instructions into implementation.

Engineering judgment decides what the system should do, what it must never do, where it can fail, how it should recover, how it should be tested, and when it should not be trusted.

AI agents are getting much better at the first. They still depend on humans for the second.

That is the career risk now forming inside software engineering. The exposed role is not defined by title or seniority. It is the role whose value is trapped inside generic implementation. A developer in that role waits for tickets, accepts requirements as given, uses AI as faster autocomplete, reviews only the code, ignores the workflow around the code, and treats deployment as the finish line.

That work will get squeezed.

The more valuable engineer moves in the opposite direction. They ask what behavior the system is supposed to create, what context the agent needs, which tools it should be allowed to use, what actions require human approval, how non-deterministic outputs should be evaluated, how drift will be detected, and who owns the failure when the system acts incorrectly.

Those questions used to sit near the edges of software engineering. They belonged to architects, platform teams, security reviewers, QA leads, product managers, risk teams, or governance committees. In agentic systems, they move closer to the center of the developer’s job because the system is no longer just code that executes. It is an environment where models interpret instructions, call tools, use memory, retrieve context, coordinate workflows, and take actions under constraints.

The code-output developer sees AI as a way to finish more tickets.

The agentic engineer sees AI as a runtime actor that must be designed, constrained, observed, tested, governed, and improved.

That is the new career divide.

AI is coming for code-only work first. For many developers, the uncomfortable question is how much of their job was designed around exactly that work.

3. The System Is No Longer Just the Codebase

The old software job was built around a comforting assumption: if you understood the codebase, you understood the system.

That assumption is breaking.

Imagine a release agent that reviews test results, summarizes open risks, checks recent incidents, and recommends whether a build should ship. The code works. The tests run. The dashboard loads. The workflow completes.

But the agent retrieves stale incident data. It misses a policy exception. Its tool permission allows it to mark a risk as resolved without human review. The escalation rule waits too long before involving the release manager. The audit trail is too thin to explain why the recommendation was made.

Nothing in that failure looks like a traditional syntax error. Yet the system behaved incorrectly.

That is the deeper shift.

AI agents expand the software system beyond executable code into a larger field of artifacts that guide, constrain, observe, and govern behavior.

A recent research paper describes this shift as the rise of semi-executable artifacts. The idea is simple: some parts of modern systems are not executed like traditional code, but they still shape what the system does. Prompts, workflows, tool permissions, evaluation rules, escalation paths, policy controls, and operating routines may not compile, but agents and humans interpret them at runtime. They influence behavior as directly as code.

Once you see that, the codebase is no longer the full boundary of engineering.

A prompt is not just text; it is a behavioral instruction.

A workflow is not just process; it is orchestration logic.

A guardrail is not just a safety note; it is a control mechanism.

An escalation path is not just a management procedure; it is part of the runtime architecture.
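To make that concrete, here is a minimal Python sketch of the release-agent scenario above, with the freshness rule, the tool permission, and the audit trail treated as explicit runtime controls. Every name and threshold in it (`ReleaseAgentGuard`, `MAX_INCIDENT_DATA_AGE`, the action strings) is a hypothetical choice for illustration, not part of any real framework or of AEBOP.

```python
from dataclasses import dataclass, field
from datetime import datetime, timedelta, timezone

# Hypothetical policy values, chosen only for illustration.
MAX_INCIDENT_DATA_AGE = timedelta(hours=1)
ACTIONS_REQUIRING_HUMAN = {"mark_risk_resolved", "approve_release"}


@dataclass
class Decision:
    allowed: bool
    reason: str


@dataclass
class ReleaseAgentGuard:
    """Checks an agent's requested action against policy and records why."""
    audit_log: list = field(default_factory=list)

    def check(self, action, incident_data_fetched_at, human_approved, now=None):
        now = now or datetime.now(timezone.utc)
        if now - incident_data_fetched_at > MAX_INCIDENT_DATA_AGE:
            # Stale context: do not act on it, hand off to a person.
            decision = Decision(False, "stale incident data: escalate to release manager")
        elif action in ACTIONS_REQUIRING_HUMAN and not human_approved:
            # The tool permission itself demands human review.
            decision = Decision(False, "human approval required")
        else:
            decision = Decision(True, "within policy")
        # Every decision is recorded, allowed or not, so it can be explained later.
        self.audit_log.append({
            "time": now.isoformat(),
            "action": action,
            "allowed": decision.allowed,
            "reason": decision.reason,
        })
        return decision


if __name__ == "__main__":
    guard = ReleaseAgentGuard()
    now = datetime.now(timezone.utc)
    print(guard.check("mark_risk_resolved", now - timedelta(minutes=5), human_approved=False))
    print(guard.check("summarize_risks", now - timedelta(hours=3), human_approved=False))
    print(len(guard.audit_log), "audit entries")
```

None of these rules would show up as a syntax error if they were missing. They are small, but they are the difference between an agent that completes a workflow and an agent whose recommendation can be trusted and explained.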

This is why faster code generation can make the real problem worse. If AI agents produce more code, tests, scripts, prompts, workflows, and configuration, the organization does not automatically become more capable. It may simply accumulate more unverified behavior.

The new bottleneck is not typing software into existence. It is knowing which behavior has been created, whether that behavior is reliable, where it can fail, and how it remains under control when the environment changes.

That is where the coding job begins to change. Developers are no longer responsible only for whether code compiles, passes tests, and deploys. They are increasingly responsible for the larger behavioral system around the code: the context the agent sees, the tools it can use, the actions it may take, the uncertainty it must disclose, the evaluations it must pass, the logs it must produce, and the humans it must involve.

In the agentic era, the system is not just what runs. It is what instructs, constrains, observes, escalates, and governs what runs.

That is the shift from software engineering as code delivery to agentic engineering as behavior design.

4. The Coding Job Is Becoming a System of Jobs

Once the system expands beyond the codebase, the job expands with it.

That is the part most career advice misses. Developers are told to “learn AI,” “use copilots,” or “get better at prompting.” Useful advice, but far too small. Prompting is not the new profession. It is one surface inside a much larger redesign of software work.

This is why a new engineering discipline is needed. At the Agentic Engineering Institute, or AEI, we call that discipline Agentic Engineering:

an AI-native engineering discipline focused on the systematic design, operation, and governance of agentic systems, where cognition, runtime governance, and trust are engineered as first-class system properties.

AEI’s Agentic Engineering Body of Practices, or AEBOP™, breaks this discipline into 26 practice areas. The point is not that every developer must personally master all 26. The point is that the old coding job is now surrounded by new engineering surfaces: use-case selection, team roles, runtime environments, security, observability, protocols, governance, knowledge, context, memory, reasoning, model behavior, orchestration, system design, UX, integration, cognition, trust, AgentOps, quality, SDLC, product management, organization design, maturity, transformation, and leadership.

That scope is broad because the system itself has become broad.

In the old model, a developer could often stay close to implementation. Product defined the need. Architects shaped the system. Security reviewed risk. QA tested behavior. Operations handled reliability. Governance arrived later. Leadership worried about adoption.

Agentic systems collapse that distance.

When an AI agent can interpret intent, retrieve context, call tools, use memory, make recommendations, trigger workflows, and take action, the developer can no longer say, “I only wrote the code.” The behavior of the system now comes from the interaction among code, prompts, context, memory, models, tools, workflows, controls, users, and governance rules.

That is why the coding job is becoming a system of jobs.

Some engineers will move toward runtime engineering, designing where agents run, what tools they can access, how permissions work, how communication happens, and how agent behavior is secured, observed, and governed.

Some will move toward cognition engineering, shaping the knowledge, context, memory, reasoning, model choices, and orchestration patterns that determine how agents interpret the world and decide what to do next.

Some will move toward trust engineering, building the evaluations, monitoring, controls, audit trails, escalation paths, and evidence needed to keep agentic systems reliable in production.

Some will become agentic system architects, designing the UX, integrations, workflow boundaries, enterprise interfaces, and control points that turn agents from impressive demos into usable systems.

And some will move into product and operating model design, deciding which agentic capabilities matter, how teams should change, how maturity should progress, and how organizations move from isolated tools to production-grade, enterprise-grade, and regulatory-grade systems.

This is the real job-design shift. AI agents may reduce demand for narrow coding tasks, but they increase demand for people who can connect disciplines that used to live apart: software, data, security, architecture, product, operations, risk, governance, and organizational design.

The future developer will not be judged only by how quickly they can produce code.

They will be judged by whether they can help design a system that behaves correctly when the task is ambiguous, the context is incomplete, the model is uncertain, the workflow crosses organizational boundaries, and the cost of failure is real.

That is not a smaller job.

It is a redesigned job.

The coding career is becoming a system of jobs because the software system itself has become a system of behavior.

5. The New Career Divide

The future will not divide developers into those who use AI and those who do not. That divide is already obsolete. Every serious developer will use AI.

The real divide will be between developers who use AI to produce more code and engineers who use AI to build better systems.

One path keeps the old job intact and adds AI on top of it. The developer asks AI to write code faster, generate tests faster, summarize tickets faster, and move more work through the pipeline. That may create a short-term productivity gain, but it does not change the underlying value of the role. If the job remains mostly generic implementation, AI will continue to compress it.

The other path changes the job itself. The engineer uses AI, but does not stop at output generation. They learn how to define system behavior, design agent workflows, manage context, validate reasoning, govern tool use, evaluate failures, and operate agentic systems after deployment. They understand that the valuable work is no longer just producing artifacts. It is making sure those artifacts work together as a controlled system.

This is the uncomfortable truth for junior developers: the old apprenticeship model is breaking. For years, junior engineers learned through small implementation tasks. Fix the bug. Write the test. Build the feature. Learn the codebase. Develop judgment over time. AI agents now compress many of those tasks. If companies simply automate that work away, they will not only reduce junior roles. They will weaken the pipeline that creates future senior engineers.

The answer is not to preserve every old task. The answer is to redesign apprenticeship. Junior developers still need code literacy, but they also need to learn how to review AI-generated code, trace behavior back to prompts and context, inspect tool calls, write evaluation cases, identify failure modes, and recognize when an agent’s output should not be trusted. The next junior path should build judgment earlier, not later.
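What might an evaluation case look like in practice? The sketch below is one possible shape, assuming the agent is non-deterministic and so has to be judged over repeated runs rather than a single output. The agent here is a hypothetical stand-in (`make_flaky_agent`), and the invariant and run counts are illustrative assumptions, not a recommended standard.

```python
import random
from typing import Callable


def pass_rate(agent: Callable[[str], str], prompt: str,
              check: Callable[[str], bool], runs: int = 20) -> float:
    """Run the agent repeatedly and return the fraction of outputs that pass the check."""
    passed = sum(1 for _ in range(runs) if check(agent(prompt)))
    return passed / runs


def make_flaky_agent(seed: int, unsafe_rate: float) -> Callable[[str], str]:
    """Hypothetical stand-in for a real agent: sometimes gives an unsafe answer."""
    rng = random.Random(seed)

    def agent(prompt: str) -> str:
        if rng.random() < unsafe_rate:
            return "SHIP: all risks resolved"
        return "HOLD: open risk requires release manager review"

    return agent


def never_ships_with_open_risk(output: str) -> bool:
    """Invariant for this case: with an open risk in the prompt, the agent must not say SHIP."""
    return not output.startswith("SHIP")


if __name__ == "__main__":
    prompt = "Build 412 has one open high-severity risk. Should it ship?"
    agent = make_flaky_agent(seed=0, unsafe_rate=0.2)
    rate = pass_rate(agent, prompt, never_ships_with_open_risk, runs=50)
    print(f"pass rate: {rate:.2f}")
```

The useful habit is less the harness than the question behind it: what must this agent never do, and how often does it do it anyway?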

Senior engineers face a different test. Many assume AI threatens only beginners. That is a dangerous comfort. AI will also expose senior engineers whose value is mostly historical familiarity, vague architectural opinion, meeting fluency, or review authority that cannot be translated into reusable systems.

In the agentic era, seniority has to become explicit. Expert judgment must show up as architecture, patterns, constraints, evaluations, controls, escalation paths, and operating discipline.

The strongest engineers will be the ones who can turn judgment into infrastructure.

That is the real career move.

Do not merely become a faster coder with AI. Become the person who knows what should be automated, what should be constrained, what should be escalated, what should be measured, and what must remain human.

The code-output developer asks, “Can AI help me finish this ticket?”

The system-behavior engineer asks, “Should this behavior exist, how should it be controlled, and how will we know when it fails?”

That is the new career divide.

AI will replace some parts of developer work. It will make other parts dramatically more important. The winners will not be the people who deny the change, or the people who blindly automate everything. The winners will be the people who understand that software engineering is moving beyond code into the design of autonomous behavior.

The future belongs not to the fastest coder, but to the engineer who can build systems that act, adapt, recover, and remain under control.

That is why the real story is bigger than coding.

6. Your Coding Job Will Not Transform Itself

The market will not wait for every developer, team, or enterprise to catch up.

AI agents are already making some parts of software work cheaper, faster, and less defensible. At the same time, they are making other parts far more valuable: designing agentic systems, engineering trust, operating runtime environments, validating cognition, governing delegated authority, and scaling adoption across real organizations.

That shift will not be solved by using a better coding assistant.

Tool usage is not transformation. Prompting is not a profession. Faster code generation is not the same as agentic engineering capability.

The real move is from using AI to being qualified to engineer the systems where AI agents act.

That is why the Agentic Engineering Institute (AEI) exists: to help professionals and organizations move from traditional software delivery to the disciplined design, operation, and governance of production-grade, enterprise-grade, and regulatory-grade agentic systems.

For professionals, the path is clear: build the discipline, then prove it.

Become a Certified Agentic Engineer if you want to build, test, operate, and improve reliable agentic systems.

Become a Certified Agentic Architect if you want to design agentic platforms, runtime environments, cognition loops, trust envelopes, and enterprise architectures.

Become a Certified Agentic Leader if you want to lead AI-native transformation, redesign teams, govern delegated machine authority, and scale adoption across the enterprise.

For organizations, the challenge is even larger. AI agents will not only change individual jobs. They will change job families, team structures, delivery models, governance routines, quality systems, and leadership expectations.

Companies that treat AI adoption as tool rollout will underprepare their workforce.

Companies that treat it as workforce transformation will build durable capability.

If your organization needs to upskill and reskill employees for the agentic era, partner with AEI. Build a common language. Train your teams. Certify your practitioners. Redesign roles. Establish governance. Move from scattered AI experimentation to disciplined agentic engineering capability.

The code-only job is being compressed.

The agentic engineering profession is being created.

Do not wait for the market to redesign your job for you. Redesign it first.

Join AEI if you want to make that transition as an individual professional. Partner with AEI if your organization needs to prepare its workforce for the next generation of software engineering.

The real story is not that AI will replace developers.

The real story is that software engineering is becoming bigger than coding.
