Apr 15

Harness Engineering vs. Agentic Engineering: The Hidden Divide Shaping Enterprise AI

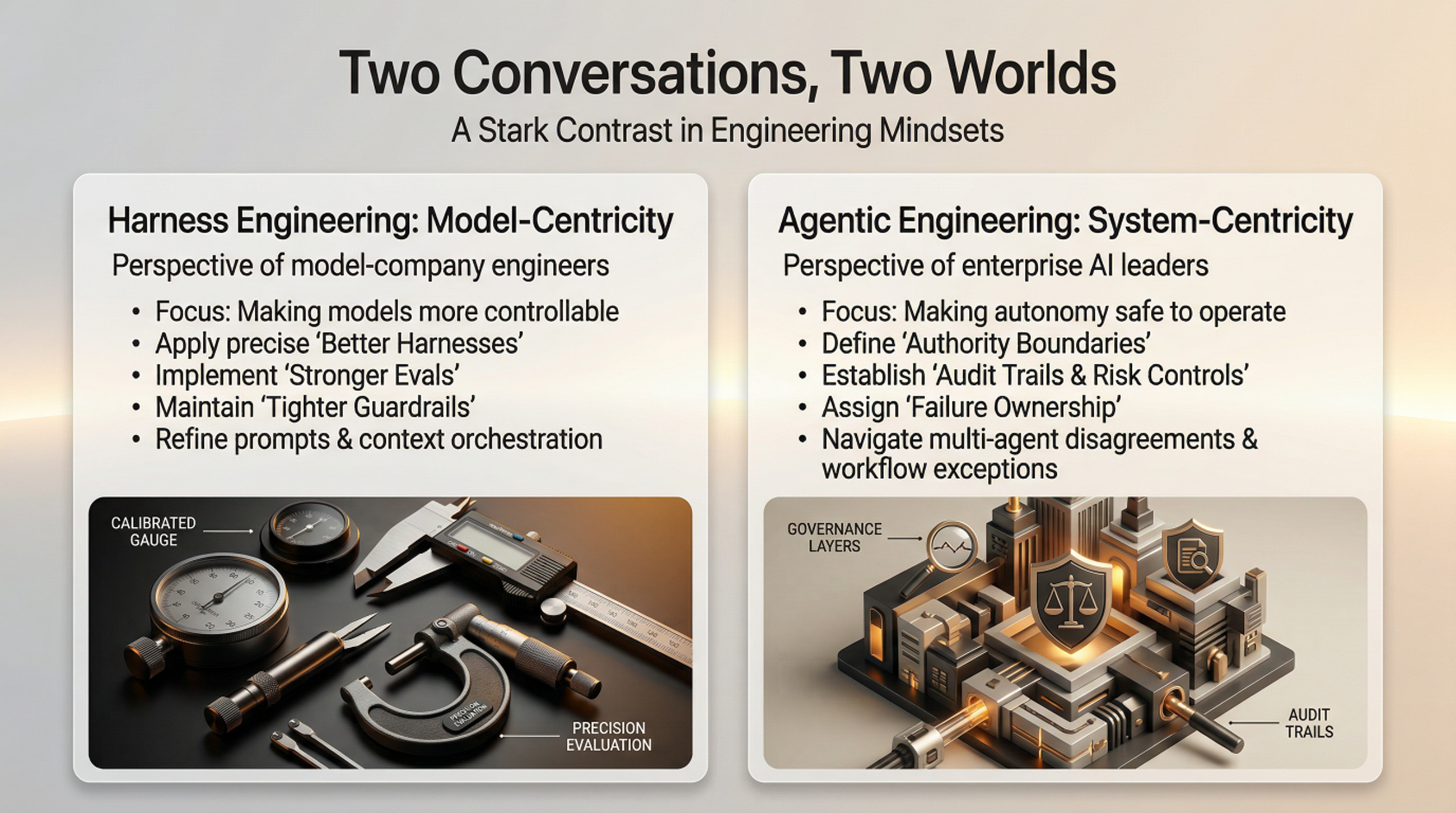

Recently, I found myself in two conversations that could not have sounded more different.

In one, I was speaking with engineers close to the foundation-model world. Their language was precise, technical, and deeply model-centric.

“We can fix that with a better harness.”

“Use stronger evals.”

“Improve tool calling.”

“Add tighter guardrails.”

“Give the model better context.”

A few days later, I had a very different conversation with enterprise AI leaders trying to put agents into real workflows.

Their questions were not about the model first. They asked:

“Who is this agent allowed to act for?”

“What happens when it makes a decision that affects a customer?”

“How do we audit its actions six months later?”

“What if two agents disagree?”

“Who owns the failure when the agent follows the prompt but violates the business process?”

That was the moment the divide became impossible to miss.

The model-company engineers were trying to make the model easier to control.

The enterprise engineers were trying to make autonomy safe to operate.

Both sides were smart. Both sides were serious. But they were arguing from different worlds.

One side was talking about Harness Engineering.

The other side was searching for Agentic Engineering.

And this hidden divide may define who wins, and who stalls, in the next decade of enterprise AI.

Harness Engineering Starts Where Model Companies Live

To understand the divide, we first need to define Harness Engineering.

Harness Engineering is the discipline of designing, building, evaluating, and operating the model-adjacent control layer that turns a general-purpose AI model into a usable task-performing component. It includes prompt and context orchestration, retrieval, memory, tool calling, guardrails, evaluation suites, telemetry, sandboxing, feedback loops, and human review points.

The idea did not appear from nowhere. In traditional software engineering, a test harness helped developers run code under controlled conditions. In machine learning, evaluation harnesses helped teams benchmark models, compare performance, and detect regressions. In the early LLM era, the “harness” expanded from testing into operation: prompts, RAG pipelines, tool integrations, policy checks, observability, and runtime controls became part of the system surrounding the model.

Then AI agents changed the stakes.

Once models began using tools, writing code, calling APIs, retrieving data, taking actions, and operating across workflows, the harness became more than a testing layer. It became the control environment around model behavior.

This is why Harness Engineering is so important for AI model companies. A more capable model is not automatically a usable product. The model must follow instructions, use tools correctly, retrieve the right context, avoid unsafe actions, produce structured outputs, pass evals, operate within latency and cost limits, and behave consistently enough for developers to trust it.

But here is the catch: every model needs a different harness.

A frontier reasoning model, a coding model, a multimodal model, a small enterprise model, and a domain-tuned model do not fail in the same way. They have different reasoning patterns, context limits, tool-use behaviors, latency profiles, cost structures, safety characteristics, and failure modes. The prompt strategy may differ. The eval suite may differ. The tool architecture may differ. The memory design may differ. The guardrail strategy may differ.

So for AI model companies, Harness Engineering is the natural game. Their world begins at the model boundary, and their job is to make that model more controllable, more useful, and easier to integrate.

That is valuable work.

But it is still model-centric.

And that is exactly where the enterprise problem begins.

Agentic Engineering Starts Where Enterprise Reality Begins

If Harness Engineering starts at the model boundary, Agentic Engineering starts where enterprise reality begins.

The Agentic Engineering Institute (AEI) formally defines Agentic Engineering as “an AI-native engineering discipline for enterprise AI, focused on the systematic design, operation, and governance of agentic systems, where cognition, runtime governance, and trust are engineered as first-class system properties.”

That definition matters because agentic systems are not just smarter applications. They reason, plan, decide, use tools, interact with other agents, and act with some level of delegated authority. Once AI systems operate this way, the hard problem is no longer only intelligence. The hard problem becomes control.

This is where the model-centric view starts to break down. Enterprises do not deploy AI into clean labs. They deploy it into messy operating environments filled with incomplete data, legacy systems, security boundaries, regulatory obligations, customer impact, workflow exceptions, and human accountability.

An enterprise agent may retrieve customer data, update a workflow, trigger a transaction, review a contract, generate code, escalate an exception, or coordinate with another agent. In each case, the question is not simply whether the model can perform the task. The real question is whether the enterprise has engineered the system that allows the agent to act safely, reliably, and accountably.

That system includes the model, but it also includes authority design, runtime governance, intervention mechanisms, audit trails, security controls, observability, lifecycle assurance, operating ownership, and human override.
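To make "authority design" and "audit trails" a little more concrete, here is a minimal sketch in Python. It is an illustration only, not an AEI reference design: the `Authority` schema, the `AuditLog` class, the `authorize` function, and the three decision labels are all hypothetical names chosen for this example.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass(frozen=True)
class Authority:
    """What an agent may do, and on whose behalf (hypothetical schema)."""
    agent_id: str
    acts_for: str                    # the accountable human or team
    allowed_actions: frozenset[str]  # actions the agent may take on its own
    needs_approval: frozenset[str]   # actions that require human sign-off


@dataclass
class AuditLog:
    """In-memory audit trail; a real system would use durable storage."""
    entries: list[dict] = field(default_factory=list)

    def record(self, agent_id: str, action: str, decision: str) -> None:
        self.entries.append({
            "at": datetime.now(timezone.utc).isoformat(),
            "agent": agent_id,
            "action": action,
            "decision": decision,
        })


def authorize(authority: Authority, action: str, log: AuditLog) -> str:
    """Return 'allow', 'escalate', or 'deny', and record the decision."""
    if action in authority.needs_approval:
        decision = "escalate"   # human intervention point
    elif action in authority.allowed_actions:
        decision = "allow"
    else:
        decision = "deny"       # anything not explicitly granted is refused
    log.record(authority.agent_id, action, decision)
    return decision
```

The point of the sketch is that none of this logic lives in the prompt or the model. Who the agent acts for, what it may do alone, and what was decided at the time are explicit system properties that can still be inspected six months later.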

This is why Agentic Engineering is fundamentally a systems-thinking and systems-engineering discipline. It asks which model, or combination of models, should be used for which task, under which authority, with which controls, in which workflow, against which business outcome.

Harness Engineering makes the model more controllable.

Agentic Engineering makes autonomy operational.

And in enterprise AI, operational autonomy is where both the real value and the real risk begin.

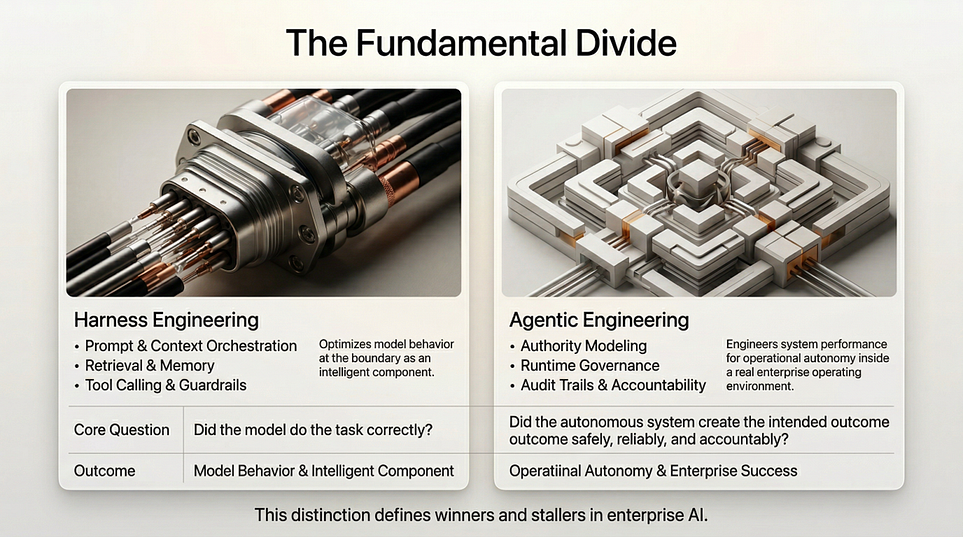

The Hidden Divide: Model Behavior vs. Enterprise Outcomes

The cleanest way to understand the difference is this:

Harness Engineering is about improving model behavior. Agentic Engineering is about improving enterprise outcomes through engineered autonomy.

That sounds like a small distinction. It is not.

A model company naturally measures success by how well a model can follow instructions, use tools, pass evals, avoid unsafe outputs, and produce reliable responses across developer scenarios. From that perspective, the harness is the right object of engineering. If the model behaves better, the product becomes more useful.

An enterprise measures success differently. The question is not whether the model behaved well in isolation. The question is whether the full system improved cycle time, reduced cost, increased accuracy, lowered risk, protected customers, satisfied governance requirements, and created measurable business value inside a real operating environment.

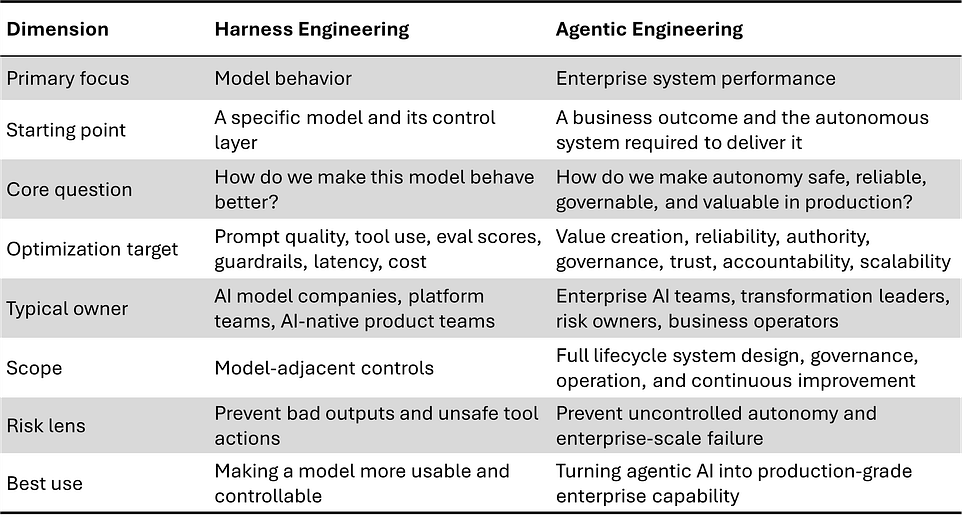

Table: Comparison of Harness Engineering and Agentic Engineering

This is the hidden divide.

Harness Engineering asks, “Did the model do the task correctly?”

Agentic Engineering asks, “Did the autonomous system create the intended outcome safely, reliably, and accountably?”

One optimizes the behavior of an intelligent component.

The other engineers the performance of an intelligent enterprise system.

That is why Harness Engineering matters, but Agentic Engineering wins in the enterprise.

The Trap: Enterprises Are Borrowing the Wrong Playbook

This is where many enterprise AI programs quietly go off course.

They copy the playbook of AI model companies.

They benchmark models. They refine prompts. They add RAG. They connect tools. They build evals. They add guardrails. They create dashboards. They run pilots. Every step looks rational. Every artifact feels like progress. Every demo gets a little better.

But the enterprise still does not have a production-grade agentic system.

Why?

Because the model-company playbook is designed to make models more usable. The enterprise playbook must make autonomy operational.

Those are different problems.

A better prompt does not define what authority the agent has. A stronger eval does not decide which actions require human approval. A guardrail does not redesign a broken workflow. A dashboard does not create accountability. A RAG pipeline does not solve data ownership. A tool integration does not establish runtime governance.

This is why so many AI initiatives feel promising in the lab but fragile in the business.

The model works. The harness works. The prototype works.

But the enterprise system around it is still under-engineered.

And once the agent touches real customers, regulated decisions, financial transactions, employee workflows, or production systems, that gap becomes impossible to hide.

Why Harness Engineering Matters, But Cannot Stand Alone

Harness Engineering is necessary. No serious agentic system should operate without strong prompts, context design, tool controls, evals, guardrails, telemetry, sandboxing, feedback loops, and human review points.

But it should not be mistaken for the whole discipline.

Harness Engineering solves the model-control problem. It helps a model follow instructions, use tools, avoid unsafe outputs, pass evals, and behave more reliably inside a defined environment. That is essential engineering work.

But enterprises face a larger problem: the autonomy-control problem.

A model can answer correctly and still act in the wrong business context. A tool call can succeed and still violate a regulated workflow. An eval can pass and still miss a production failure mode. A dashboard can show activity and still fail to establish accountability. A guardrail can block unsafe language and still fail to control unsafe authority.
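The last of those failure modes can be shown with a toy example. The sketch below assumes a hypothetical refund scenario: `BLOCKED_TERMS`, `ProposedAction`, the `issue_refund` tool name, and the refund limit are all invented for illustration. One check looks only at language, the other only at authority.

```python
from dataclasses import dataclass

# Hypothetical content policy: phrases the agent must never say.
BLOCKED_TERMS = {"guaranteed returns", "ssn"}


@dataclass(frozen=True)
class ProposedAction:
    message: str   # what the agent will say to the customer
    tool: str      # which tool it wants to call
    amount: float  # monetary value of the action


def content_guardrail(action: ProposedAction) -> bool:
    """Model-adjacent check: is the language acceptable?"""
    text = action.message.lower()
    return not any(term in text for term in BLOCKED_TERMS)


def authority_check(action: ProposedAction, refund_limit: float) -> bool:
    """System-level check: may the agent take this action at all?

    Simplification: only refunds are limited here; a real policy
    would cover every tool the agent can reach.
    """
    if action.tool != "issue_refund":
        return True
    return action.amount <= refund_limit


action = ProposedAction(
    message="We are sorry for the trouble. Your refund is on its way.",
    tool="issue_refund",
    amount=5000.0,
)
language_ok = content_guardrail(action)                     # polite wording
authority_ok = authority_check(action, refund_limit=200.0)  # far over limit
```

In this run the wording clears the guardrail while the action itself exceeds what the agent is permitted to do. A harness that only inspects language would let it through.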

That is the boundary.

Harness Engineering improves the control layer around the model. Agentic Engineering designs the operating system around autonomy.

This is why Harness Engineering belongs inside Agentic Engineering, not beside it or above it. It is one of the required practice areas, but not the full architecture. Agentic Engineering extends beyond model-adjacent controls into requirements, authority modeling, runtime governance, security, trust fabric, observability, multi-agent coordination, human-agent collaboration, lifecycle assurance, operating model design, and continuous improvement.

For enterprises, this hierarchy is decisive.

If enterprises treat Harness Engineering as the whole discipline, they may build better model wrappers while still failing to create production-grade AI capability. But when enterprises place Harness Engineering inside Agentic Engineering, the harness becomes part of a larger system designed for reliability, governance, accountability, and business value.

Harness Engineering helps the model perform.

Agentic Engineering helps the enterprise win.

The Real Moat Is the Engineered System

For years, enterprise AI strategy has been dominated by a simple assumption: better models will create better outcomes.

That assumption is becoming dangerous.

Models will continue to improve. Harnesses will become more sophisticated. Tool ecosystems will mature. Guardrail patterns will spread. Evaluation methods will become standard practice. Much of this will be available to everyone.

That means the durable advantage will not come from access to a model alone. It will not come from a clever prompt. It will not even come from a strong harness if competitors can build or buy similar controls.

The real moat will be the engineered system around autonomy.

That system includes the enterprise’s ability to select the right model or combination of models, assign the right authority, enforce the right controls, integrate with the right workflows, monitor the right signals, escalate the right decisions, learn from the right failures, and continuously improve performance in production.

This is where enterprises can create durable advantage. Not by chasing every model release, but by building the discipline to turn agentic AI into repeatable operating capability.

A competitor can access the same model.

A competitor can copy a harness pattern.

But it is much harder to copy an enterprise’s engineered system: its workflows, governance routines, data context, authority structures, evaluation discipline, feedback loops, operating model, and institutional learning.

That is the shift from model-centric AI to system-centric AI.

And that is why Agentic Engineering matters.

In the agentic era, the winning question is no longer, “Which model is best?”

The winning question is, “What system are we engineering that others cannot easily replicate?”

The Enterprise AI Winners Will Play a Different Game

The next generation of enterprise AI winners will not be the organizations with the longest list of AI tools, the most pilots, or the fastest access to the newest model.

Those advantages will be temporary.

The real winners will be the enterprises that build the capability to engineer autonomy as a repeatable operating system. They will know where AI agents should act, where they should not, which models should be used for which tasks, how different models should work together, what authority each agent should have, what controls must be enforced at runtime, when humans must intervene, how failures are detected, and how the system improves over time.

This is very different from buying an AI platform and hoping transformation follows. A platform can provide infrastructure. A model can provide intelligence. A harness can improve control. But none of them automatically gives an enterprise the discipline to redesign work, govern autonomy, manage risk, and scale value across business units.

That discipline must be engineered.

This is why the old AI leadership question is no longer enough:

Which model should we use?

The better question is:

What system must we engineer so autonomous AI can create value safely, reliably, and accountably in production?

That system may include multiple models, multiple harnesses, multiple agents, multiple governance layers, multiple reliability tiers, and multiple human decision points. It may need deterministic controls for regulated processes, runtime governance for high-risk actions, human escalation for uncertain cases, and continuous evaluation as business conditions change.
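One way to picture those layers working together is a simple routing rule. The sketch below is an assumption-laden illustration: the `Route` names, the `route` function, the `"high"` risk label, and the 0.8 confidence threshold are hypothetical, and the ordering of the checks is one possible policy among many.

```python
from enum import Enum


class Route(Enum):
    DETERMINISTIC = "deterministic_control"
    HUMAN_REVIEW = "human_escalation"
    GOVERNED_AGENT = "agent_with_runtime_governance"
    AGENT = "agent"


def route(regulated: bool, risk: str, confidence: float,
          min_confidence: float = 0.8) -> Route:
    """Pick a handling path for one unit of work (hypothetical policy)."""
    if regulated:
        # Regulated processes get fixed, rule-based handling.
        return Route.DETERMINISTIC
    if confidence < min_confidence:
        # Uncertain cases go to a person.
        return Route.HUMAN_REVIEW
    if risk == "high":
        # High-risk actions run under runtime policy enforcement.
        return Route.GOVERNED_AGENT
    return Route.AGENT
```

For example, `route(regulated=False, risk="high", confidence=0.95)` returns `Route.GOVERNED_AGENT`, while the same request with `confidence=0.5` returns `Route.HUMAN_REVIEW`. The design choice worth noticing is that the decision depends on the business context of the work, not on which model happens to be behind the agent.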

This is the different game enterprise AI winners will play.

They will not simply adopt agentic AI.

They will engineer it.

Harness the Model. Engineer the System.

Harness Engineering will continue to matter. Every serious agentic system needs strong prompts, context design, tool integration, evals, guardrails, telemetry, sandboxing, and feedback loops.

But for enterprises, the bigger challenge is no longer how to wrap one model better. The bigger challenge is how to engineer autonomy as a production-grade capability.

That means designing systems where models are selected for the right tasks, authority is explicitly defined, governance runs at runtime, failures are observable, humans remain accountable, and value is measured beyond the demo.

This is the hidden divide shaping enterprise AI.

AI model companies will keep improving the harness.

Enterprise winners will engineer the system.

That is why Agentic Engineering matters now. It gives organizations the discipline to move beyond pilots, prompts, wrappers, and demos toward agentic systems that are safe, reliable, governable, and valuable in production.

At AEI, this is the work we are advancing: defining the standards, practices, operating models, and professional discipline for production-grade Agentic Engineering.

For individuals: join AEI to master the discipline that will shape the next era of AI careers.

For enterprises: partner with AEI to build the engineering capability required to move from AI experimentation to durable AI advantage.

Harness the model. Engineer the system. That is how enterprises win with AI.
