- Thursday

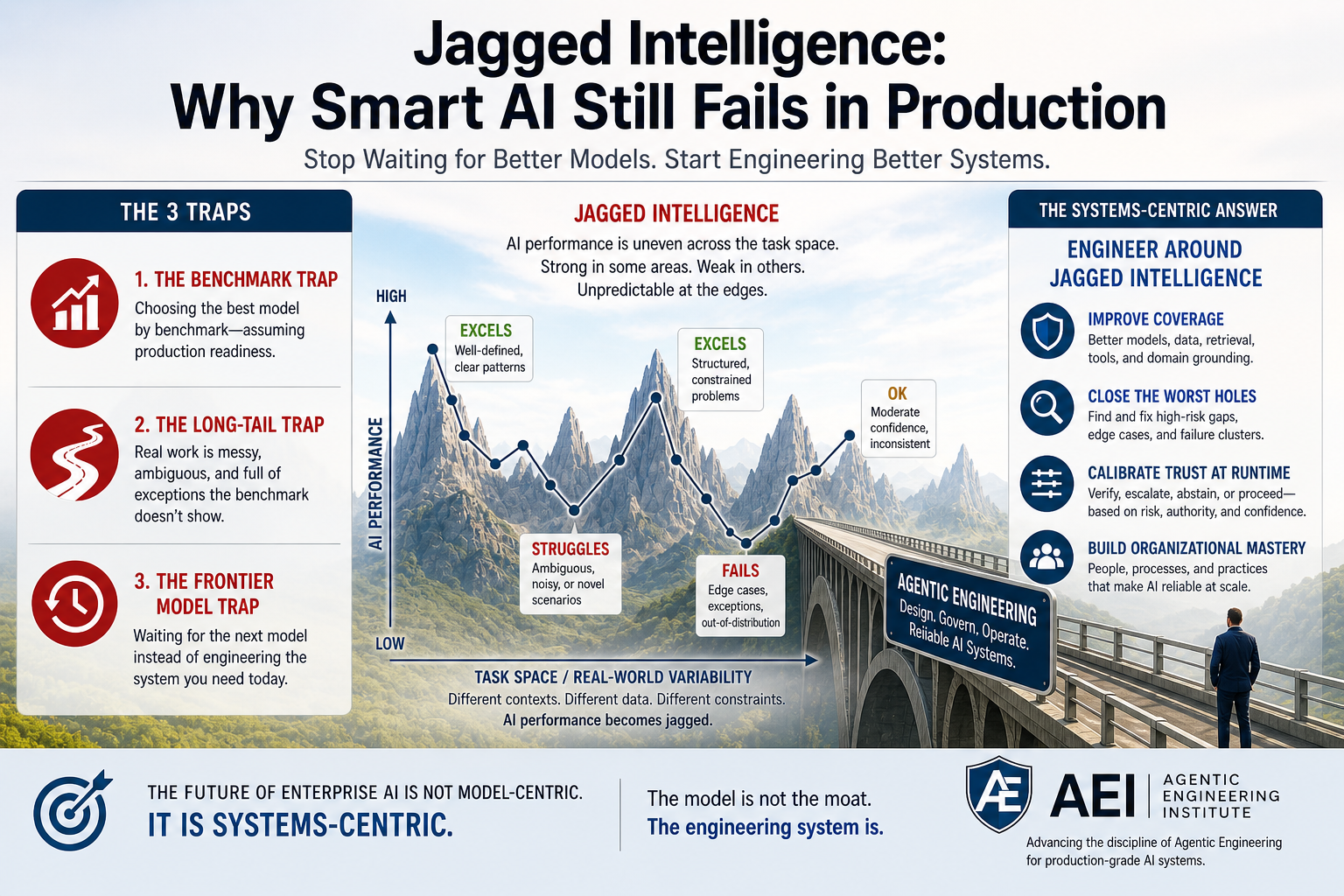

Jagged Intelligence: Why Smart AI Still Fails in Production

A Fortune 500 AI leader consulted with me recently about a problem his team could not explain away.

Their AI system could solve complex tasks that impressed senior executives. It could analyze dense documents, generate high-quality recommendations, and handle sophisticated workflows that looked impossible only a year ago.

Then it would fail on something simple.

Not always. Not predictably. Not in a way that was easy to reproduce.

That was the most frustrating part.

The team was not dealing with a bad model. They were dealing with uneven intelligence. The AI could look brilliant in one task, then fragile in a nearby task that appeared easier to a human. It could perform well in a pilot, then break trust when the context changed slightly, the data became messy, or the workflow moved closer to real production.

This is where many enterprise AI programs get stuck.

The demo proves the model can be impressive.

Production asks a much harder question: can the system be reliable when the task changes, the stakes rise, and the cost of being wrong becomes real?

That is the hidden failure pattern behind many AI initiatives today.

The issue is not that AI is weak.

The issue is that enterprises keep mistaking model intelligence for system reliability.

The Name for This Problem: Jagged Intelligence

The Fortune 500 leader’s frustration came from a very human assumption:

if a system can handle the hard task, it should be able to handle the easier nearby task.

That assumption works reasonably well with people. A senior analyst who can synthesize a complex market report usually will not fail to identify the customer name on page one. A strong software engineer who can redesign a distributed architecture usually will not misunderstand a basic function signature. Human competence is not perfectly consistent, but it usually has a shape we recognize. We expect capability to degrade gradually, not collapse unpredictably.

AI breaks that intuition.

Jagged intelligence is the uneven, discontinuous, and often opaque performance pattern in which an AI system can demonstrate high competence on one task while failing on a nearby task that appears similar, simpler, or logically adjacent to a human user.

That definition matters because it shifts the discussion from “AI makes mistakes” to something more precise. Every system makes mistakes. The distinctive problem with jagged intelligence is that the mistake pattern is hard for humans and organizations to predict. A model can analyze a dense document, produce a polished recommendation, and appear strategically capable. Then, with a small change in wording, context, data format, or workflow condition, it can miss an obvious constraint, misread a basic fact, or produce a confident answer that no trained person would trust.

The recent working paper on Artificial Jagged Intelligence formalizes this same pattern: generative AI systems often show highly uneven performance across tasks that appear “nearby,” where small changes in wording or context can move the model from excellent to confidently wrong. More importantly, it frames adoption as an information problem. Users need to know local reliability, but they typically see only broad quality signals such as demos, benchmarks, vendor claims, or pilot success stories.

For enterprise AI, this is the critical turn. The question is no longer whether the model is powerful in general. The question is whether the organization can know where the model is reliable enough to use, where it needs verification, where it must escalate, and where it should not be allowed to act at all.

A benchmark tells you the model can perform well somewhere. Production requires confidence that the system can perform reliably here: in this workflow, with this data, for this user, under this policy, at this risk level, with this degree of authority.

That is why jagged intelligence creates so much friction inside large organizations. One team may experience AI as transformative because its tasks sit inside a pocket of competence. Another team may reject the same system because its workflow lands in a hole of failure. Both teams may be right. The contradiction is not necessarily cultural resistance, poor training, or lack of imagination. It may be structural: they are operating in different regions of the same jagged capability landscape.

The enterprise problem is not just whether the model is smart.

The enterprise problem is whether the organization can map, govern, and operationalize that intelligence safely.

The Benchmark Trap

This is why so many enterprise AI teams get surprised after choosing a frontier model.

They do the responsible thing. They compare models across reasoning benchmarks, coding benchmarks, math benchmarks, long-context evaluations, multimodal tests, latency, cost, security posture, and vendor scorecards. They select the model that looks strongest on paper. The decision feels rational because the model really is impressive.

Then the model meets the reality.

It meets messy data, inconsistent document formats, legacy workflows, specialized jargon, partial context, conflicting policies, edge cases, and users who do not behave like benchmark prompts. Suddenly, the “best” model does not feel best everywhere. It performs beautifully in one workflow, unevenly in another, and unpredictably in the cases that matter most.

That is not because benchmarks are useless. Benchmarks are useful for comparing broad model capability. They are weak proxies for production readiness.

A benchmark can tell you that a model is strong in general. It cannot tell you whether that model will handle your claims workflow, your compliance review, your customer escalation path, your internal codebase, your procurement exceptions, or your regulated decision process with enough reliability to delegate real work.

This is where model-centric AI creates false confidence. It treats model selection as the main strategic decision. Pick the best model, tune the prompt, add retrieval, run a pilot, and scale. But in production, the hard question is not which model wins the leaderboard. The hard question is whether the system built around the model can control behavior when the task becomes ambiguous, consequential, or operationally messy.

A simple manufacturing analogy makes the point. A low average defect rate means very little if the defects concentrate in safety-critical components. The issue is not just how often failure happens. It is where failure happens, how costly it is, whether it can be detected, and whether the system can prevent it from reaching the customer.

Enterprise AI works the same way.

The strongest frontier model can still create production risk if the enterprise does not know where to verify, where to restrict, where to escalate, and where not to delegate at all.

The benchmark tells you which engine is powerful.

Production tells you whether you built a vehicle that can be driven safely.

The Long-Tail Trap

The surprising part is not that AI fails in production. The surprising part is how often production seems to find the exact places where the model is weakest.

That is the long-tail trap.

Enterprise workflows are not evenly distributed across clean, simple, well-defined tasks. The easiest work is usually already automated, scripted, templated, outsourced, or handled by existing systems. What remains is the long tail: ambiguous requests, incomplete information, unusual customer cases, inconsistent documents, policy exceptions, legacy constraints, undocumented dependencies, and decisions that require judgment.

So when enterprises deploy AI, they are often not giving it the average task. They are giving it the task that escaped the average process.

That distinction matters. A benchmark may test broad capability. A pilot may test curated examples. But production traffic naturally drifts toward work that is confusing, delayed, disputed, costly, politically sensitive, or operationally inconvenient. In other words, production does not just reveal the gaps in AI capability. It routes work into them.

The Artificial Jagged Intelligence paper explains this dynamic through a useful bridge analogy. If AI knowledge is like a bridge supported by pylons, short spans are safer and long spans are riskier. But a person walking across the bridge spends more time on the long spans because those spans occupy more of the bridge. The user’s experienced risk is therefore higher than the simple average suggests. This is the inspection paradox:

users are statistically overexposed to the weak regions of a jagged capability landscape.

The same pattern shows up across enterprise AI. A customer-support assistant may handle routine questions well, but the long tail includes angry customers, missing account details, refund disputes, legal sensitivity, unclear intent, and policy exceptions. A coding assistant may perform well on isolated examples, but the long tail includes undocumented dependencies, inconsistent patterns, old architectural decisions, brittle integrations, and security constraints. A compliance assistant may summarize policy well, but the long tail includes jurisdictional ambiguity, contradictory evidence, business pressure, and exceptions no one documented cleanly.

This is why pilots can mislead. A pilot often asks, “Can AI perform selected tasks?”

Production asks,

“Can AI survive the long-tail distribution of the business?”

That question changes everything.

People do not remember AI like a benchmark suite. They remember the case that mattered. One bad answer in a brainstorming session is tolerable. One bad answer in a customer escalation, legal review, financial approval, security workflow, or executive decision can redefine the organization’s confidence in the entire system.

The lesson is not that AI should avoid hard work. The lesson is that long-tail work requires a different architecture. If production naturally routes AI toward ambiguity, exceptions, and consequences, then production-grade AI cannot rely on broad model confidence alone.

It must be engineered for the long tail.

The Frontier Model Trap

When enterprise AI teams run into jagged performance, the most tempting response is also the most dangerous one: “Let’s wait for the next model.”

It sounds rational. Frontier models are improving quickly. The next release may reason better, code better, handle longer context, use tools more effectively, and perform better across standard benchmarks. In many cases, waiting does improve the pilot. A task that failed six months ago may work today. A workflow that looked impossible last year may now look feasible.

That is exactly why the trap is so hard to see.

The next model usually does make the system smarter. But it does not automatically make the system production-grade. It may reduce some failures, but it does not tell the enterprise where the remaining failures are. It may handle more tasks, but it does not define when the system should verify, escalate, abstain, or stop. It may generate more convincing answers, but it does not create authority boundaries, audit evidence, runtime policies, or operational accountability.

So the team waits, upgrades, tests again, and sees progress. Then production exposes a new edge.

The customer case is more ambiguous than the test set. The internal policy has exceptions. The data is incomplete. The tool call changes state. The codebase has undocumented dependencies. The workflow crosses a compliance boundary. The user asks a question that looks simple but sits outside the model’s reliable region.

The model improved. The production problem moved.

This is the frontier model trap: using model progress as a substitute for system design.

The Artificial Jagged Intelligence paper explains why this pattern persists. Scaling increases coverage across the task space and improves average quality, but it does not eliminate jaggedness. In the bridge analogy, adding more pylons shortens the risky spans. That helps. But unless users can identify which spans are safe, which are fragile, and which require another path, blind adoption remains risky.

This is especially dangerous because better models earn more trust. A weak model is easy to contain because everyone doubts it. A strong model is different. People start relying on it. Teams expand the scope. Leaders push for automation. Agents get connected to tools, workflows, memory, APIs, records, and decisions. The model’s intelligence increases, but so does the cost of being wrong.

That is why “wait for the next model” is not a production strategy.

The next frontier model may be dramatically better, but it still will not design your escalation paths, define your decision rights, enforce your runtime policies, monitor your tool calls, govern your memory, produce audit evidence, or determine which actions require human approval. Those are not model upgrades. They are system responsibilities.

Waiting can improve capability.

It cannot replace engineering.

The Systems-Centric Answer: Engineer Around Jagged Intelligence

The three traps have the same root cause.

The benchmark trap treats model ranking as production evidence.

The long-tail trap assumes real work will behave like clean evaluation tasks.

The frontier model trap uses model progress to postpone system design.

All three come from the same model-centric assumption: if the model becomes strong enough, the production problem will mostly solve itself.

That assumption is wrong.

Model-centric AI asks, “Which model is best?”

Systems-centric AI asks, “What must be engineered around the model so this workflow can run reliably, safely, observably, and under the right authority?”

That shift changes everything. A model can generate an answer. A production AI system must decide whether the context is sufficient, whether the evidence is trustworthy, whether the action is authorized, whether the risk requires verification, whether the system should proceed, and whether the decision can be audited later.

Those are not model-selection problems. They are system-design problems.

This is the deeper lesson of jagged intelligence. Because AI reliability is local, uneven, and often opaque, enterprises cannot depend on broad model capability alone. They need systems that can discover where AI works, constrain where it is fragile, and adapt as the reliability landscape changes. The Artificial Jagged Intelligence paper makes this point directly: value depends not only on average quality, but on whether users can locate reliable regions and avoid unreliable ones.

This is where Agentic Engineering becomes essential.

Agentic Engineering is the systems-centric discipline for designing, operating, and governing AI systems that reason, use tools, interact with context, and act with delegated authority. It treats runtime governance, trust boundaries, observability, evaluation, escalation, authority, memory, tool use, and auditability as first-class engineering concerns.

In practical terms, systems-centric AI does four things.

It improves capability coverage through better models, retrieval, tools, and domain grounding.

It closes the worst holes by identifying recurring failure clusters and long-tail exceptions.

It calibrates trust at runtime by deciding when to proceed, verify, escalate, abstain, or block action.

And it builds organizational mastery so teams learn where AI can be safely delegated and where human judgment remains essential.

That is the role of the Agentic Engineering Institute: to define and advance the discipline required for production-grade agentic AI. AEI’s standards, training, certification, and partner programs help professionals and organizations move beyond model-centric experimentation toward governed, reliable, enterprise-grade AI systems.

The next phase of enterprise AI will not be won by organizations that merely adopt the most powerful model.

It will be won by organizations that can engineer reliable systems around jagged intelligence.

The New AI Moat Is System Reliability

The first phase of generative AI was about access to powerful models.

The next phase will be about engineering reliable systems around them.

Jagged intelligence exposes the gap. A model can be brilliant and still uneven. A pilot can be impressive and still fragile. An enterprise can choose the best frontier model and still fail if it cannot govern, verify, constrain, and operationalize that intelligence in real workflows.

That is why the next AI moat is not model access.

It is system reliability.

This is the shift from model-centric AI to systems-centric Agentic Engineering.

AI is moving from assistants to agents, from content generation to delegated action, and from demos to enterprise operations.

The winners will not be the organizations with the newest model.

They will be the organizations that can engineer AI systems they can trust.

Because in production, the model is not the moat.

The engineering system is.

Join AEI. Partner with AEI. Lead the shift.

Free AEI Newsletters

Expert insights and updates on Agentic Engineering—delivered straight to your inbox.