- Apr 10

Most People Aren’t Learning AI. They’re Paying an Anxiety Tax.

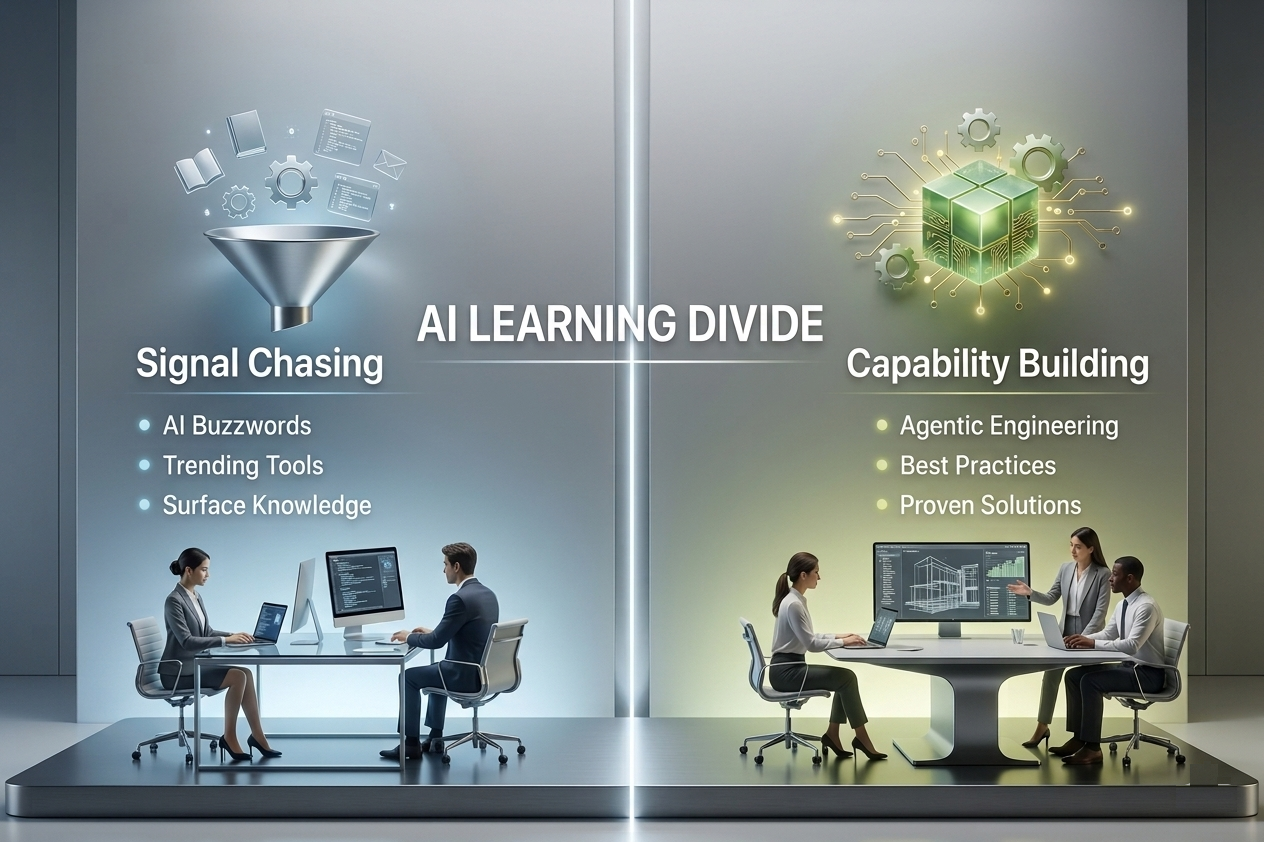

In the AI age, two learning camps are emerging: one buys signal, the other builds capability.

Late on a Sunday, an accomplished professional asks a question they would never say out loud in a meeting: Am I actually behind on AI, or just behind on looking like I’m not?

That question is not really about learning. It is about fear.

Fear that years of hard-won judgment are being quietly repriced by a market that now rewards AI fluency more visibly than proven capability. Fear that deep expertise in building businesses, leading teams, running operations, designing products, or shipping systems suddenly counts for less than being able to speak confidently about the model, tool, or framework of the week. Fear that being excellent at your job is no longer enough if you cannot also perform relevance on demand.

So another tab opens. Another explainer gets bookmarked. Another article gets skimmed, highlighted, and half-forgotten. Not because people are lazy. Not because they are unserious. Not because they are anti-technology. Because they are tired. Tired of hearing that everything is changing. Tired of watching every conversation collapse into the same vague command: learn AI. Tired of the quiet humiliation of being highly competent and still feeling one model release away from irrelevance.

This is the part the market keeps getting wrong. Most people learning AI are not doing so because they are captivated by transformer architectures or thrilled to spend their weekend comparing agent frameworks. Many are learning AI because the labor market has started to equate visibility with viability.

That pressure is splitting AI learning into two very different camps, and confusing them is becoming one of the most expensive mistakes in the AI era.

The first camp buys currency. The second builds capability.

The first camp is learning AI for currency. These are people trying to remain legible to the market. They want the keyword on the resume, the proof point on LinkedIn, the confidence that they will not be the one left out when budgets tighten and expectations rise. Their learning is not fake. But it is often defensive. It is learning as insurance.

The second camp is learning AI for capability. These are people trying to redesign work. They care less about whether they can name the latest framework and more about whether they can reduce cycle time, improve decision quality, govern automation, redesign a workflow, or create measurable value under real operating constraints. Their learning is not about appearing current. It is about becoming effective.

From the outside, the two camps can look identical. Both attend webinars. Both test tools. Both say they are “upskilling.” But underneath, they are doing very different things. One is trying to quiet fear. The other is trying to build power.

That distinction matters because AI adoption is spreading much faster than AI maturity. Stanford’s 2025 AI Index reports that 78% of organizations said they used AI in 2024, up from 55% the year before. Stack Overflow’s 2025 Developer Survey found that 84% of respondents are using or planning to use AI tools in their development process, and 51% of professional developers say they use them daily. The tools are now mainstream. Adoption is no longer the interesting part of the story.

What is more interesting, and more uncomfortable, is that widespread adoption has not produced widespread maturity. McKinsey reports that 92% of executives expect to increase AI spending over the next three years, yet only 1% describe their organizations as mature in AI deployment. In separate survey research, McKinsey found that fewer than one-third of organizations follow most of the practices associated with successful scaling, and only 21% have fundamentally redesigned at least some workflows. That is the real story of the moment: high enthusiasm, high activity, and still very limited institutional capability.

The real divide is durable skills versus perishable skills

The easiest way to understand this problem is through the lens of perishable and durable skills.

Perishable AI skills are tied to the interface of the moment: a framework API, a prompt pattern, a tool-specific workflow, a vendor-specific stack, a model quirk, a benchmark trend. These skills can absolutely be useful. Sometimes they are urgently useful. But they decay quickly because the ground under them keeps moving.

Durable AI skills are different. They include problem framing, workflow decomposition, evaluation design, system architecture, governance judgment, reliability thinking, change management, domain expertise, and the ability to distinguish a compelling demo from a trustworthy production system. These skills are slower to build and much harder to fake, but they survive vendor churn and technology cycles.

A simple analogy helps. Perishable skills are the produce aisle. Fresh, visible, and necessary, but quick to spoil. Durable skills are the kitchen. Timing, sequencing, heat, judgment, taste. The produce changes every week. The craft of cooking is what lets you make something that actually works.

This is why so many experienced professionals feel an anxiety they cannot quite name. They know, often intuitively, that the market is rewarding fresh produce while undervaluing the kitchen. They are being asked whether they learned the latest tool when the deeper value they bring lies in understanding where systems break, how workflows fail, what humans will ignore, and which design choices create downstream fragility.

The half-life of AI knowledge is collapsing

The half-life problem makes all of this worse.

Harvard Business Review notes that the average half-life of a skill is now often less than five years, and in some technical fields it can be roughly half that. BCG makes a similar point about the shrinking half-life of skills, especially in tech domains. In other words, the surface layer of what you know is decaying much faster than it used to.
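To make the half-life figures concrete, here is a minimal sketch of the arithmetic. It assumes a simple exponential-decay model, which is an illustrative assumption and not something the cited sources specify. The function name `remaining_fraction` is hypothetical, and the half-lives of 5 and 2.5 years simply mirror the "under five years" and "roughly half that" figures above.

```python
def remaining_fraction(years_elapsed: float, half_life_years: float) -> float:
    """Fraction of a skill's original relevance left after some years.

    Assumes simple exponential decay: relevance halves every
    `half_life_years`. This is an illustrative model only.
    """
    if half_life_years <= 0:
        raise ValueError("half_life_years must be positive")
    if years_elapsed < 0:
        raise ValueError("years_elapsed must be non-negative")
    return 0.5 ** (years_elapsed / half_life_years)


if __name__ == "__main__":
    # Compare a 5-year half-life with a 2.5-year half-life (assumed values).
    for half_life in (5.0, 2.5):
        for years in (1, 3, 5):
            share = remaining_fraction(years, half_life)
            print(f"half-life {half_life} yrs, after {years} yrs: {share:.0%} remains")
```

Under this model, a skill with a five-year half-life keeps half its relevance after five years, while one with a 2.5-year half-life keeps only a quarter over the same period.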

That has enormous implications for AI learning. The tutorial you watched last quarter may already be less relevant. The “best practice” you memorized may turn out to be a temporary workaround. The shiny framework everyone was discussing six months ago may be abstracted away, replaced, or folded into a larger platform. Perishable knowledge now expires at machine speed.

But the half-life of durable judgment is much longer. Knowing how to evaluate a system against a real business objective has a long half-life. Knowing how to design for failure has a long half-life. Knowing how to govern delegated machine action in production has a long half-life. Knowing how to connect AI outputs to accountability, workflow redesign, and measurable value has a long half-life.

That is the trap. Many organizations see rising AI learning activity and assume capability is increasing. Often the opposite is happening. The company is increasing its exposure to information while doing almost nothing to improve its ability to generate reliable value from that information.

The economics reward churn, not mastery

Once you see the half-life problem, the economics become obvious.

The market makes more money from perishable learning than durable mastery. It can sell you a course on this quarter’s framework, a prompt guide for this month’s model, a workshop on copilots, an “AI for leaders” bootcamp, and then another one when the stack shifts again. Perishable learning creates repeat demand because the knowledge keeps expiring.

Durable mastery does not fit the business model so neatly. You cannot compress sound architectural judgment into a weekend. You cannot buy operational wisdom at 40% off. You cannot acquire production instincts from a certificate. Those capabilities come from building, testing, failing, governing, and improving under real conditions.

This is why so much AI learning feels strangely unsatisfying. The market keeps selling fresh produce to people who actually need a better kitchen.

The same pattern shows up in online learning more broadly. Research reviewed in Frontiers found that MOOC completion rates commonly fall between 5% and 40%, while another review found median completion rates often hover around 10% to 13%. Access is easy. Enrollment is easy. The hard part is not registering interest. The hard part is turning exposure into capability and capability into value.

This is why AI slop feels so overwhelming

Now layer AI slop on top of all of this.

AI slop is not just bad generated content. It is the broader fog machine of the moment: recycled summaries dressed up as insight, framework diagrams with no operating reality behind them, benchmark commentary without deployment context, synthetic thought leadership assembled faster than thought itself. In a world where language is cheap, language stops being proof.

That is why so many people feel overwhelmed. The problem is not lack of access. It is the collapse of signal.

When knowledge becomes cheap, discernment becomes expensive.

Real wisdom has a different texture from noise. Noise tells you what is possible. Wisdom tells you what breaks. Noise is fascinated by tools. Wisdom is obsessed with outcomes. Noise celebrates speed. Wisdom names controls, tradeoffs, incentives, and failure modes. Noise can explain the happy path. Wisdom knows what happens at 2 a.m. when the model is wrong, the process is weak, and the human escalation path was never truly designed.

That is the test that matters now. Not who can talk the most about AI, but who can separate performance from capability, novelty from judgment, and activity from real-world value creation.

In the AI age, practice is the moat

For years, expertise was partly protected by access: access to information, access to methods, access to synthesis, access to technical language. AI shattered that model. Now anyone can generate a decent summary, produce a serviceable explainer, or sound superficially informed in minutes.

So the moat has moved.

Knowing is no longer the moat. Practicing is.

Practice is where consequences live. Practice is where architecture meets latency, governance, regulation, human behavior, legacy systems, bad incentives, and operational reality. Practice is where a clever demo either matures into enterprise value or collapses into one more expensive illusion.

That is why, in the AI age, knowledge is cheap and practice is what creates real-world value. Summaries are abundant. Tutorials are infinite. The language of expertise is everywhere. But reliable execution is still rare, and earned judgment is rarer still.

The organizations that win will not be the ones that consumed the most AI content. They will be the ones that built the strongest operating discipline around AI: how to evaluate it, govern it, instrument it, constrain it, and redesign work around it.

From AI noise to real wisdom for AI value creation

This is where the conversation has to change.

The answer to AI overload is not more AI content. It is real wisdom. And in the AI age, real wisdom does not come from reading one more article, watching one more webinar, or memorizing one more framework diagram. It comes from practice. It comes from seeing what actually works in the field, what fails under pressure, what scales, what breaks, and what must be engineered differently when AI moves from demo to production.

That is why this moment calls for more than AI fluency. It calls for a real discipline.

Agentic Engineering Institute (AEI) is built around that need. It advances Agentic Engineering as the engineering discipline for building, governing, and operating agentic systems in real enterprise conditions. Its foundation is not theory assembled at a distance. It is drawn from 600+ real-world AI deployments across regulated and mission-critical environments, where success is measured not by how impressive a demo looks, but by whether the system creates reliable value in the real world.

That distinction matters. The market is already saturated with AI explainers, AI hot takes, AI slop, and endlessly recycled commentary about tools, models, and trends. What organizations actually need is something far rarer: code-of-practice standards that capture real-world best practices, hard-earned lessons, field-tested patterns, operational safeguards, and the anti-patterns that quietly destroy value when autonomy meets enterprise reality.

This is the role AEI plays. It helps turn scattered experimentation into disciplined practice. It helps leaders, architects, engineers, and enterprises separate what is durable from what is fashionable, what is production-grade from what is prototype theater, and what creates value from what merely creates noise. It brings structure to the messy realities of agentic systems: reliability, runtime governance, lifecycle discipline, trust, operating models, and the engineering controls required to make autonomous capability actually work in production.

In a world where AI knowledge is cheap, this is the scarce asset: not more information, but better judgment. Not more AI language, but better engineering discipline. Not more activity, but more real-world value creation.

The organizations that win in the AI era will not be the ones that consume the most AI content. They will be the ones that build the strongest discipline for turning AI into trusted, scalable, measurable outcomes.

That is the work of Agentic Engineering.

If you want to move beyond AI noise and build real capability, start with the discipline itself. Download AEI’s free standards, study the code-of-practice guidance, and learn from the real-world patterns, anti-patterns, and hard-earned lessons behind 600+ deployments. Because in the end, AI value will not be created by those who talk about AI the most. It will be created by those who know how to engineer it in the real world.
