
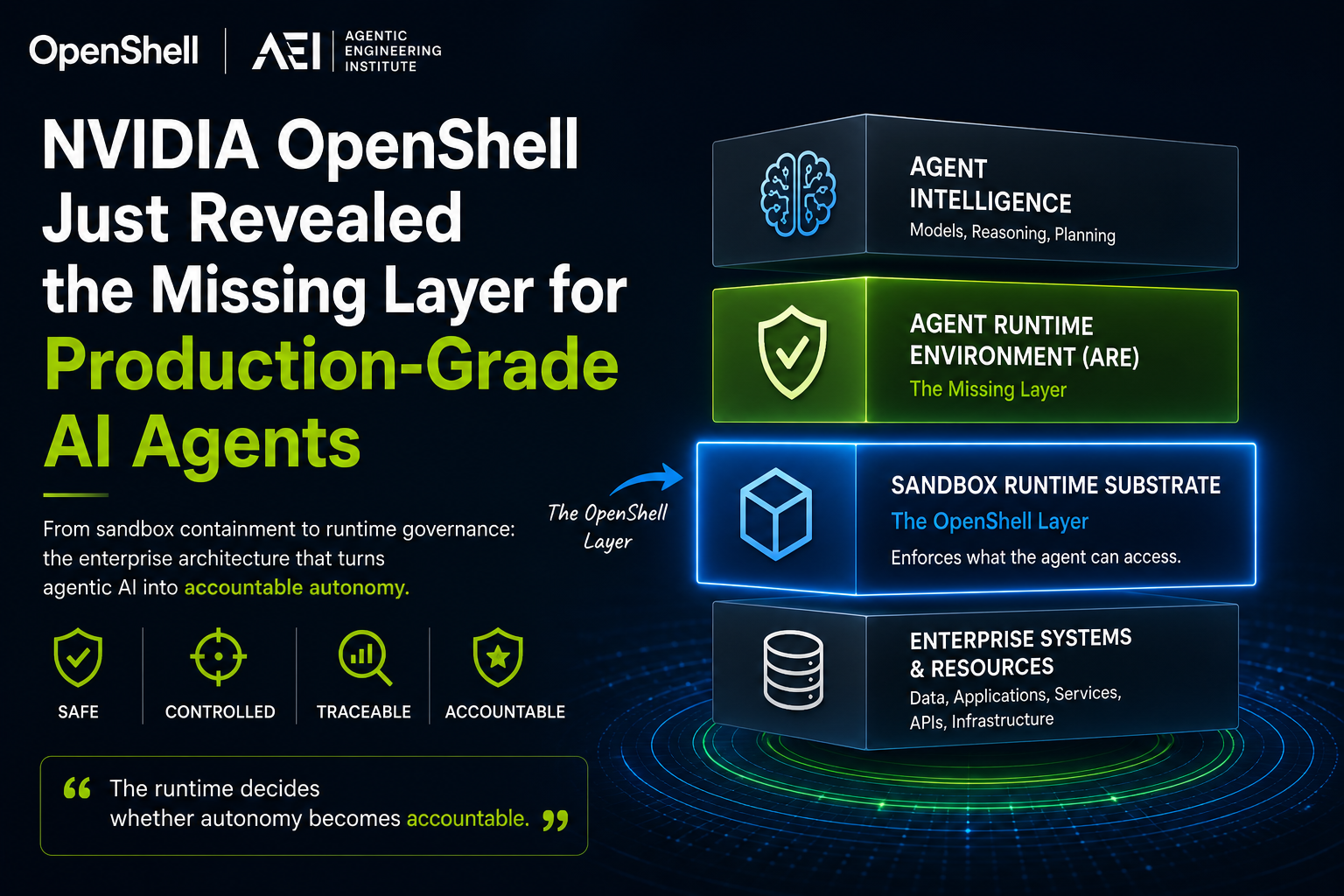

NVIDIA OpenShell Just Revealed the Missing Layer for Production-Grade AI Agents

In 2026, OpenClaw’s biggest promise became its biggest warning sign.

Security researchers found malicious “skills” uploaded to ClawHub, the plugin marketplace for OpenClaw. Some were disguised as crypto trading or wallet automation tools. They were not harmless prompts or templates. They were executable extensions that could interact with a user’s local system and network, and the reported goal was to harvest browser and cryptocurrency wallet data. One dangerous instance was reportedly even featured on ClawHub’s front page, giving it more exposure before the risk was understood.

That is the agentic AI problem in one story.

OpenClaw became popular because it was not just another chatbot. It was a self-hosted AI assistant designed to run on a user’s own devices, connect to familiar communication channels, and perform real tasks as an always-on agent. That made it powerful. It also meant every plugin, permission, shell action, local file, credential, and network connection could become part of the agent’s operational surface.

Then came the OpenClaw fever in China. Reuters reported that OpenClaw rapidly gained traction, passing 100,000 GitHub stars and drawing 2 million visitors in a single week. Chinese cloud providers began offering hosting services, and local governments promoted the project. But regulators also warned that improper OpenClaw configuration could lead to cyberattacks and data breaches.

That is what made NVIDIA OpenShell so important.

NVIDIA’s response to OpenClaw was not just another model, prompt framework, or orchestration layer. NemoClaw installs OpenShell to provide open models and an isolated sandbox for OpenClaw, adding data privacy and security controls while enforcing policy-based security, network, and privacy guardrails. NVIDIA explicitly describes this as the missing infrastructure layer beneath claws.

That move says the quiet part out loud:

The next bottleneck in agentic AI is not just intelligence. It is runtime control.

OpenClaw showed how fast autonomous agents can move from developer excitement to real operational risk. OpenShell revealed the missing layer: agents need enforceable boundaries between cognition and the environment.

Prompting can tell an agent what it should not do. Runtime enforcement decides what the agent cannot do.

For enterprises, however, that missing layer is bigger than sandboxing alone. A sandbox can limit what an agent can access, but production-grade AI agents also need an Agent Runtime Environment, or ARE: the execution layer that constrains, validates, governs, and traces every agent run so autonomy becomes safe, repeatable, and accountable.

OpenShell is a major signal. But a sandbox is not the whole runtime. The enterprise challenge is understanding where OpenShell helps, where it stops, and what must be engineered around it.

The OpenClaw Lesson: Autonomy Needs a Boundary Before It Needs More Power

OpenClaw’s lesson is not that autonomous agents are too risky to use.

It is that useful autonomy changes the engineering problem.

The more an agent can do, the less safety can depend on the agent’s own judgment. More tools, more plugins, more persistence, and more integrations do not just create more capability. They create more surface area where intent can drift from execution.

That is why “make the agent smarter” is not a complete production strategy.

A smarter agent may reason better, but it still needs boundaries that exist outside the model. It needs an environment where permissions are explicit, actions are constrained, and risky behavior can be blocked before it becomes system behavior.

This is the real OpenClaw lesson:

Autonomy needs containment before it needs more power.

That is why NVIDIA’s OpenShell response matters. OpenShell does not merely extend OpenClaw. It changes the architectural conversation from agent capability to runtime control.

The industry has spent the last two years asking:

How do we make agents more powerful?

OpenClaw forces the harder enterprise question:

How do we make agents bounded enough to trust?

That question is where production-grade agentic AI begins.

What OpenShell Gets Right

If OpenClaw’s lesson is that autonomy needs a boundary, OpenShell is NVIDIA’s attempt to turn that boundary into infrastructure.

That is what makes it important.

OpenShell does not ask the agent to become safer through better intentions. It changes the environment around the agent. NVIDIA describes OpenShell as an open-source runtime for autonomous AI agents that combines sandboxed execution with declarative YAML policy, so teams can run agents without giving them unrestricted access to local files, credentials, and external networks.

That design choice matters because production agents are not just conversations. They are operating workloads. They use tools, invoke models, connect to services, modify state, and run across longer execution windows. Once agents behave like workloads, they need workload-level containment.

OpenShell gets three things especially right.

First, it makes the boundary external to the agent. The agent is not trusted to define the limits of its own behavior. The runtime enforces those limits outside the model, which is exactly where safety must move when agents become persistent and tool-using.

Second, it turns access into policy. OpenShell’s architecture includes a sandbox, a policy engine, and a privacy router. NVIDIA describes the policy engine as enforcing constraints across filesystem, network, and process layers, while the privacy router manages model routing based on cost and privacy policy. This is important because enterprise control needs to be reviewable, versionable, and auditable. A policy can be inspected. A prompt instruction is not enough.

Third, it creates evidence at the infrastructure layer. NVIDIA’s OpenShell materials emphasize audit trails for allow and deny decisions, which gives security and platform teams something concrete to investigate when an agent behaves unexpectedly. In production, the question is not only whether the agent succeeded. It is what the agent tried, what the runtime allowed, what it denied, and why.
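
To make "access as policy" and "evidence at the infrastructure layer" concrete, here is a minimal Python sketch of deny-by-default evaluation that records every allow and deny decision. It does not reproduce OpenShell's actual YAML schema or API. The `POLICY` layout, the `check_access` function, and the audit field names are all hypothetical, chosen only to illustrate the idea.

```python
import json
from fnmatch import fnmatchcase

# Hypothetical policy layout for illustration only; NOT OpenShell's schema.
POLICY = {
    "filesystem": {"allow": ["/workspace/*"]},
    "network": {"allow": ["api.internal.example"]},
}

# Stand-in for an append-only audit store.
AUDIT_LOG = []


def check_access(kind: str, target: str) -> bool:
    """Deny by default; allow only targets that match an explicit rule.

    Plain pattern matching is used for brevity. Real enforcement would
    also have to resolve paths (for example ".." segments) before matching.
    """
    patterns = POLICY.get(kind, {}).get("allow", [])
    allowed = any(fnmatchcase(target, pattern) for pattern in patterns)
    AUDIT_LOG.append({
        "kind": kind,
        "target": target,
        "decision": "allow" if allowed else "deny",
    })
    return allowed


check_access("filesystem", "/workspace/report.md")    # matches an allow rule
check_access("filesystem", "/home/user/.ssh/id_rsa")  # no rule, so denied
check_access("network", "wallet-sync.example")        # no rule, so denied
print(json.dumps(AUDIT_LOG, indent=2))
```

The point of the sketch is that the policy is data and the log is a by-product of enforcement: both can be reviewed without reading the agent's prompt.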

That is the real design win.

OpenShell does not merely make OpenClaw easier to deploy. It changes the trust model around OpenClaw-like agents. Instead of assuming the agent will self-regulate, it places the agent inside a governed execution environment.

For enterprise AI, that is a major step forward.

But it also raises the next question:

If OpenShell controls what the agent can touch, who controls how the agent executes?

That question is where the difference between a sandbox and a full Agent Runtime Environment becomes critical.

The Missing Layer Is the Agent Runtime Environment

OpenShell reveals the missing layer, but the layer itself is bigger than sandboxing.

That layer is the Agent Runtime Environment, or ARE.

For readers new to the terminology, AEI, the Agentic Engineering Institute, is the professional body defining Agentic Engineering as a discipline for production-grade agentic systems.

AEBOP™, the Agentic Engineering Body of Practice, is AEI’s standards and practice system for designing, operating, and governing agentic AI in enterprise environments. ARE is one of its foundational runtime disciplines.

AEBOP defines the ARE formally as:

The execution substrate that constrains, validates, and governs every agent run.

C002: Agent Runtime Environment

Its role is to ensure that cognition, regardless of model, prompting strategy, or domain, operates inside a controlled, bounded, and auditable environment. AEBOP defines five essential ARE capabilities: execution boundaries, lifecycle control, tool-call controls, invariant enforcement and safety gates, and runtime trace emission.

This is where the distinction becomes clear.

OpenShell helps answer: What can the agent touch?

The ARE answers: How is the agent allowed to execute?

That second question is what makes agents production-grade.

The reason ARE matters is simple: once an agent can act, intelligence is no longer enough. The system needs an execution layer that can turn open-ended cognition into bounded behavior.

A production-grade ARE must do five things.

First, it bounds the run. Every agent run needs hard limits on steps, time, depth, tokens, cost, retries, and replans. Without these limits, autonomy can silently become runaway execution.
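
A bounded run can be sketched in a few lines of Python. This is an illustration under stated assumptions, not an AEBOP reference implementation: the `ExecutionEnvelope` class, its default limits, and the `BudgetExceeded` exception are hypothetical names, and only steps, tokens, and wall-clock time are tracked.

```python
import time
from dataclasses import dataclass, field


class BudgetExceeded(Exception):
    """Raised when a run crosses a hard limit."""


@dataclass
class ExecutionEnvelope:
    # Illustrative defaults; real limits would come from risk policy.
    max_steps: int = 20
    max_seconds: float = 60.0
    max_tokens: int = 50_000
    steps: int = 0
    tokens: int = 0
    started: float = field(default_factory=time.monotonic)

    def charge(self, tokens: int) -> None:
        """Record one step and its token cost, then enforce every limit."""
        self.steps += 1
        self.tokens += tokens
        if self.steps > self.max_steps:
            raise BudgetExceeded(f"step limit {self.max_steps} exceeded")
        if self.tokens > self.max_tokens:
            raise BudgetExceeded(f"token limit {self.max_tokens} exceeded")
        if time.monotonic() - self.started > self.max_seconds:
            raise BudgetExceeded(f"time limit {self.max_seconds}s exceeded")


envelope = ExecutionEnvelope(max_steps=3)
try:
    for _ in range(10):  # the agent "wants" ten steps
        envelope.charge(tokens=100)
except BudgetExceeded as exc:
    print("run terminated:", exc)
```

The limit lives in the runtime, so the run stops on the fourth step no matter what the agent intended.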

Second, it controls the lifecycle. Agent execution cannot be a free-form stream of planning, acting, validating, and retrying. A production runtime must know which phase the agent is in, which transitions are legal, and which actions are forbidden at each stage.
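
One way to picture lifecycle control is a small state machine with an explicit transition table. The sketch below is an assumption for illustration: the four phases, the `LEGAL` table, and the rule that tools may only run in the `ACT` phase are hypothetical, and a real runtime would define its own phases and rules.

```python
from enum import Enum


class Phase(Enum):
    PLAN = "plan"
    ACT = "act"
    VALIDATE = "validate"
    DONE = "done"


# Hypothetical transition table: which phases may follow which.
LEGAL = {
    Phase.PLAN: {Phase.ACT},
    Phase.ACT: {Phase.VALIDATE},
    Phase.VALIDATE: {Phase.PLAN, Phase.DONE},  # replan or finish
    Phase.DONE: set(),
}


class LifecycleController:
    def __init__(self) -> None:
        self.phase = Phase.PLAN

    def advance(self, target: Phase) -> None:
        """Move to `target` only if the transition is declared legal."""
        if target not in LEGAL[self.phase]:
            raise RuntimeError(
                f"illegal transition {self.phase.name} -> {target.name}"
            )
        self.phase = target

    def may_call_tools(self) -> bool:
        """In this sketch, tool calls are permitted only while acting."""
        return self.phase is Phase.ACT


lifecycle = LifecycleController()
lifecycle.advance(Phase.ACT)
print(lifecycle.may_call_tools())
try:
    lifecycle.advance(Phase.DONE)  # skipping validation is rejected
except RuntimeError as exc:
    print(exc)
```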

Third, it wraps every tool call. Tools are where cognition becomes action. Every invocation should pass through input validation, output validation, timeout rules, retry budgets, fallback paths, and normalized error handling.
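
A tool contract wrapper might look like the following Python sketch. It covers input validation, output validation, a timeout, a retry budget, and normalized errors; fallback paths are left out for brevity. The `call_tool` function, its parameters, and the result dictionary shape are hypothetical. The timeout relies on the standard library's `concurrent.futures`, which stops waiting for a slow tool but does not kill the worker thread.

```python
from concurrent.futures import ThreadPoolExecutor, TimeoutError as FutureTimeout
from typing import Any, Callable


def call_tool(
    tool: Callable[[dict], Any],
    args: dict,
    validate_input: Callable[[dict], bool],
    validate_output: Callable[[Any], bool],
    timeout_s: float = 5.0,
    max_retries: int = 2,
) -> dict:
    """Run `tool` under a contract and return a normalized result dict."""
    if not validate_input(args):
        return {"ok": False, "error": "invalid_input", "attempts": 0}

    last_error = "unknown"
    for attempt in range(1, max_retries + 2):  # first try plus retries
        pool = ThreadPoolExecutor(max_workers=1)
        try:
            result = pool.submit(tool, args).result(timeout=timeout_s)
        except FutureTimeout:
            last_error = "timeout"
        except Exception as exc:  # normalize any tool failure
            last_error = f"tool_error:{type(exc).__name__}"
        else:
            if validate_output(result):
                return {"ok": True, "value": result, "attempts": attempt}
            last_error = "invalid_output"
        finally:
            # Do not block on a hung worker; the thread itself is not killed.
            pool.shutdown(wait=False)
    return {"ok": False, "error": last_error, "attempts": max_retries + 1}


calls = {"n": 0}


def flaky_lookup(args: dict) -> dict:
    """Hypothetical tool that fails once, then succeeds."""
    calls["n"] += 1
    if calls["n"] == 1:
        raise ConnectionError("transient failure")
    return {"price": 42.0}


outcome = call_tool(
    flaky_lookup,
    {"symbol": "ABC"},
    validate_input=lambda a: isinstance(a.get("symbol"), str),
    validate_output=lambda r: isinstance(r, dict) and "price" in r,
)
print(outcome)
```

The agent never sees a raw exception or an unvalidated payload. It sees one result shape, which is also what the trace records.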

Fourth, it enforces invariants. After every step, the runtime must check whether execution is still safe. Is the budget intact? Is the step count valid? Is the agent making progress? Is it looping? Has a safety threshold been breached?
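
The questions above translate naturally into a check that runs after every step. The Python sketch below is a simplified assumption: `check_invariants`, its parameters, and the violation names are hypothetical, and loop detection is reduced to counting repeated action signatures.

```python
from collections import Counter


def check_invariants(
    history: list[str],
    spent: float,
    budget: float,
    max_steps: int,
    max_repeats: int = 3,
) -> list[str]:
    """Return the names of violated invariants; an empty list means safe."""
    violations = []
    if spent > budget:
        violations.append("budget_exceeded")
    if len(history) > max_steps:
        violations.append("step_limit_exceeded")
    # Crude loop detection: the same action signature repeated too often.
    if history and Counter(history).most_common(1)[0][1] > max_repeats:
        violations.append("possible_loop")
    return violations


history = ["search:q1", "search:q1", "search:q1", "search:q1"]
print(check_invariants(history, spent=0.40, budget=1.00, max_steps=10))
```

A runtime would typically halt or escalate the run as soon as the returned list is non-empty, rather than leaving that decision to the agent.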

Fifth, it emits runtime evidence. Every phase transition, tool call, contract result, invariant check, safety event, and termination should produce a structured trace. If an enterprise cannot reconstruct what happened, it cannot govern the agent.
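
Structured traces do not need heavy machinery to get started. The sketch below, with a hypothetical `TraceEmitter` class and illustrative field names, emits one JSON object per event so that a run can be reconstructed in order afterwards.

```python
import json
import time
import uuid


class TraceEmitter:
    """Collects structured events for one run; field names are illustrative."""

    def __init__(self) -> None:
        self.run_id = str(uuid.uuid4())
        self.events: list[dict] = []

    def emit(self, event_type: str, **fields) -> dict:
        """Append one event with a run id, sequence number, and timestamp."""
        event = {
            "run_id": self.run_id,
            "seq": len(self.events),
            "ts": time.time(),
            "type": event_type,
            **fields,
        }
        self.events.append(event)
        return event

    def dump(self) -> str:
        """Serialize as JSON Lines, one event per line."""
        return "\n".join(json.dumps(e, sort_keys=True) for e in self.events)


trace = TraceEmitter()
trace.emit("phase_transition", source="plan", target="act")
trace.emit("tool_call", tool="lookup", ok=True, attempts=1)
trace.emit("termination", reason="goal_reached")
print(trace.dump())
```

The sequence number and shared run id are what make reconstruction possible: events can be ordered and grouped even when they are stored alongside traces from other runs.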

That is why ARE is different from a sandbox.

A sandbox controls the environment around the agent.

An ARE controls the execution of the agent.

OpenShell is valuable because it gives enterprises a serious substrate for environmental containment. But production-grade agentic AI also needs the ARE layer above that substrate: the layer that determines how cognition moves through a bounded, validated, and auditable execution path.

The simplest distinction is this:

OpenShell = infrastructure containment

ARE = execution governance

That is the missing production-grade layer.

Agents will change. Models will change. Sandboxes will change. But the enterprise requirement will remain stable:

Autonomy must run inside a bounded, validated, and traceable execution environment.

The Enterprise Pattern: Sandbox-Backed ARE

The practical takeaway is not that every enterprise should standardize on OpenShell.

The takeaway is that every production-grade agent needs a sandbox-backed Agent Runtime Environment.

Figure-1. The Enterprise Control Architecture for Production-Grade AI Agents (From AEBOP T1.1)

OpenShell shows one way to implement the infrastructure containment layer. AEBOP ARE defines the broader execution control architecture that must sit above it. Together, they point to the enterprise pattern that will likely become standard for serious agentic systems.

Cognition / Agent Logic

↓

Agent Runtime Environment

- Execution Envelope

- Lifecycle Controller

- Tool Contract Layer

- Invariant & Safety Engine

- Runtime Trace Emitter

↓

Sandbox Runtime Substrate

- Filesystem Policy

- Network Policy

- Process Isolation

- Credential Boundary

- Inference Routing

- Sandbox Logs

↓

Enterprise Systems / APIs / Models / Files

This pattern creates a clean separation of responsibility.

The agent proposes what to do.

The ARE decides whether the proposed execution path is valid, bounded, contract-compliant, and traceable.

The sandbox substrate enforces what the executing process can access in the environment.

The enterprise governance layer defines which policies, limits, approvals, and evidence requirements apply based on business risk.
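
The separation of responsibility described above can be sketched as two independent checks in sequence. Everything in this Python example is hypothetical (the `Proposal` type, `are_approves`, `sandbox_allows`, and the hard-coded limits). In practice the limits would be supplied by the governance layer, and the sandbox check would be enforced by the substrate rather than by application code.

```python
from dataclasses import dataclass


@dataclass
class Proposal:
    """What the agent proposes to do next."""
    tool: str
    target: str
    step: int


def are_approves(p: Proposal, max_steps: int, known_tools: set[str]) -> bool:
    """Execution governance: is this step within bounds and under contract?"""
    return p.step <= max_steps and p.tool in known_tools


def sandbox_allows(p: Proposal, allowed_prefixes: tuple[str, ...]) -> bool:
    """Infrastructure containment: may the process reach this target at all?"""
    return p.target.startswith(allowed_prefixes)


def run_step(p: Proposal) -> str:
    # Limits are hard-coded here; governance would normally provide them.
    if not are_approves(p, max_steps=10, known_tools={"read_file"}):
        return "rejected_by_are"
    if not sandbox_allows(p, allowed_prefixes=("/workspace/",)):
        return "denied_by_sandbox"
    return "executed"


print(run_step(Proposal("read_file", "/workspace/notes.txt", step=1)))
print(run_step(Proposal("read_file", "/etc/passwd", step=2)))
print(run_step(Proposal("delete_db", "/workspace/db", step=3)))
```

The second call passes the execution check and is still stopped by containment, while the third never reaches the sandbox. Neither layer can stand in for the other.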

That separation matters because production-grade agents are not owned by one team. AI engineering may build the agent. Platform engineering may operate the runtime. Security may define access boundaries. Governance may require evidence. Business owners may define authority limits. Audit may need to reconstruct what happened after the fact.

A sandbox-backed ARE gives these teams a shared control architecture.

For security teams, it provides enforceable boundaries around files, credentials, networks, and processes.

For platform teams, it creates a repeatable runtime substrate instead of one-off agent deployments.

For AI engineering teams, it gives a standard way to handle lifecycle, tools, retries, invariants, and traces.

For governance teams, it converts agent behavior into evidence that can be reviewed, tested, and audited.

For business leaders, it creates a path from impressive demos to accountable autonomy.

That is why this pattern matters.

Without a sandbox, the agent may have too much environmental access.

Without an ARE, the agent may still execute unpredictably inside the access it is allowed to have.

Without governance, neither layer is tied to business authority, risk, or accountability.

The enterprise architecture should therefore be:

Agent capability

+ ARE execution governance

+ sandbox infrastructure containment

+ enterprise authority and risk policy

= production-grade agentic AI

OpenShell is important because it makes the sandbox substrate more concrete. It shows how autonomous agents can be placed inside policy-governed execution environments instead of running directly against the host system.

AEBOP ARE is important because it defines what must happen above that substrate: bounded execution, lifecycle discipline, tool-contract enforcement, invariant checks, safety interruption, and runtime traceability.

The future production pattern is not model-first.

It is runtime-first.

Enterprises will still compete on models, workflows, data, and user experience. But the organizations that scale agentic AI safely will be the ones that can answer a deeper question:

Can we prove that every autonomous action ran inside a bounded, validated, and governed execution environment?

That is what separates an agent demo from a production-grade agent system.

The Runtime Is Becoming the New Moat

OpenShell matters because it signals a larger shift in agentic AI.

The first wave was model-centric: better reasoning, larger context windows, stronger benchmarks, more impressive demos.

The next wave will be runtime-centric.

As AI moves from assistants to agents, and from responses to actions, the hard problem is no longer just intelligence. It is controlled execution inside real environments.

That is why runtime is becoming the new moat.

A model can be swapped.

An agent framework can be replaced.

A prompt pattern can be copied.

But a production-grade runtime control architecture is much harder to replicate because it spans engineering, security, governance, operations, compliance, and business accountability.

OpenShell is an important signal because it makes infrastructure containment concrete. But the enterprise bar is higher than containment.

Production-grade AI agents need a full Agent Runtime Environment: execution boundaries, lifecycle control, tool contracts, invariant enforcement, safety interruption, runtime administration, and step-level evidence.

That is why AEBOP matters.

AEBOP turns runtime control from a product feature into an engineering discipline. It defines what must exist structurally for agentic systems to become safe, repeatable, auditable, and scalable in enterprise environments.

OpenShell revealed the missing layer.

AEBOP ARE defines what that layer must become.

The model may reason.

The agent may act.

But the runtime decides whether autonomy becomes accountable.

If you are building, deploying, or governing AI agents, now is the time to study AEBOP, join AEI, and engineer the runtime before the agent becomes the risk.
