- Apr 14

Stanford AI Index 2026 Reveals the Agentic Shift AI Leaders Still Miss

Yesterday, Stanford HAI released the 2026 AI Index Report. Most people will focus on bigger models, faster adoption, and the U.S.-China race.

They should not.

The most important number in the report may be this:

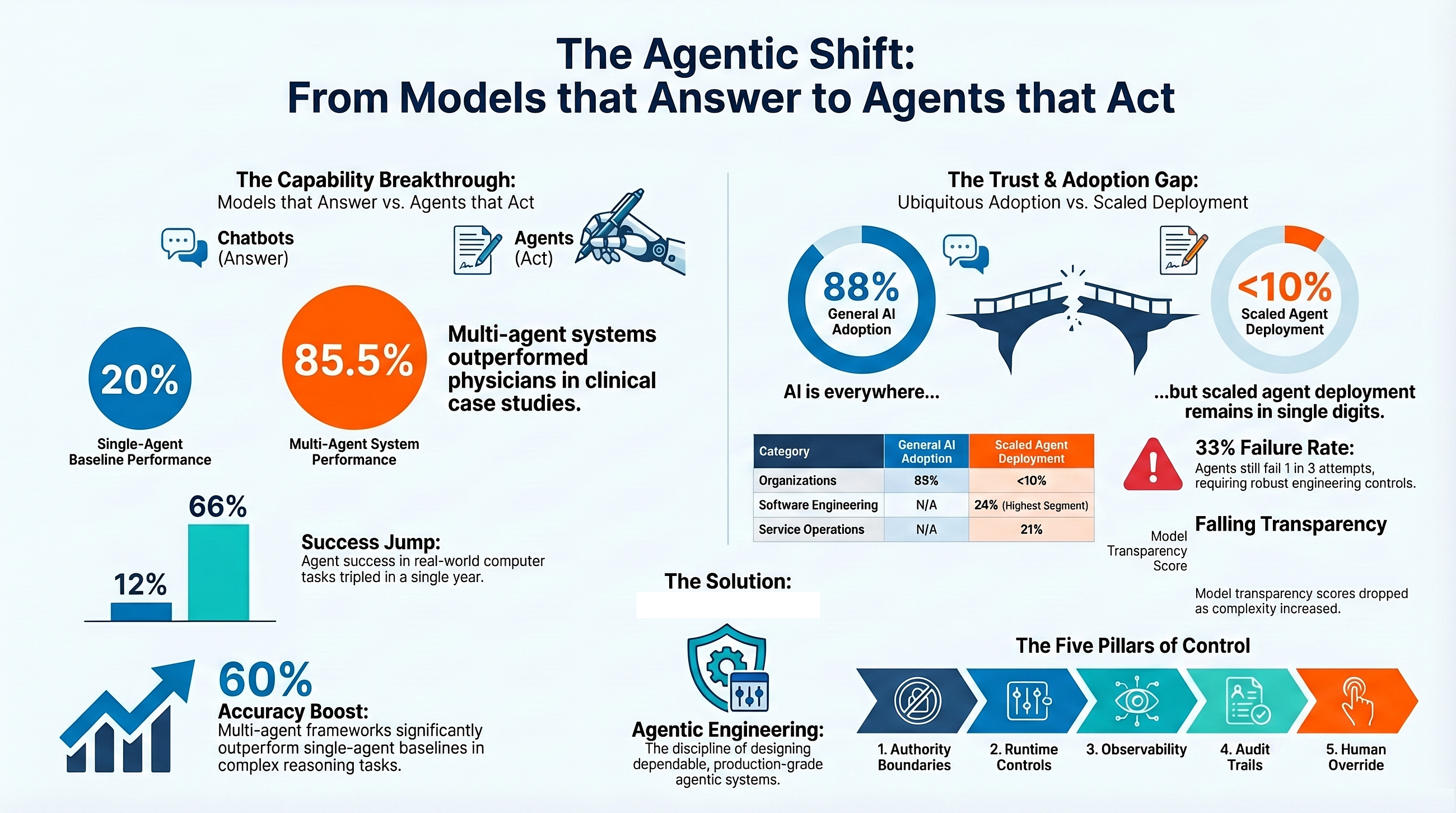

a multi-agent AI system scored 85.5% on complex published case studies, versus 20% for unaided physicians.

That is not just another model benchmark.

It is a signal that the frontier is shifting from models that answer to agents that act.

And most AI leaders still have not fully internalized what that means.

Stanford’s report says AI is increasingly being tested on real-world task execution, not just reasoning in isolation. It also says the systems around AI, including governance frameworks and evaluation methods, are struggling to keep pace.

That is the story.

The next AI race is no longer only about who has the smartest model.

It is about who can engineer the most trustworthy agentic system.

The Agentic Shift Is Already Showing Up in the Data

The Stanford report makes clear that the move toward AI agents is no longer speculative. It is already showing up in the data.

In healthcare, a multi-agent AI system scored 85.5% on complex published clinical case studies, versus 20% for unaided physicians under comparable conditions. Stanford also notes that multi-agent clinical frameworks improved diagnostic accuracy over single-agent baselines by 7% to more than 60%, depending on task complexity.

That matters because it shows the frontier is no longer defined only by how well a single model answers a question.

It is increasingly defined by how well a system of agents can reason across steps, coordinate specialized roles, use tools, and complete a task.

Stanford shows the same pattern beyond medicine. On OSWorld, a benchmark for real computer tasks across operating systems, agent success jumped from 12% to about 66% in a year. That is real progress. But Stanford also notes that agents still fail roughly 1 in 3 attempts.

That contrast is the real signal.

AI agents are now strong enough to matter.

They are not yet reliable enough to trust by default.

And that is exactly why the next competitive edge will not come from model intelligence alone. It will come from the ability to engineer agents that can operate reliably, safely, and under control in the real world.
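To make the "1 in 3" figure concrete, here is a small arithmetic sketch. The only number taken from the report is the roughly 66% OSWorld success rate. The idea of chaining several tasks in a row, and the assumption that each task succeeds independently at the same rate, are illustrative simplifications rather than anything Stanford measured.

```python
def failure_rate(success_rate: float) -> float:
    """Share of attempts that fail, given the share that succeed."""
    return 1.0 - success_rate


def chained_success(success_rate: float, steps: int) -> float:
    """Chance that `steps` tasks in a row all succeed.

    ASSUMPTION (not from the report): tasks are independent and equally hard.
    """
    return success_rate ** steps


osworld_success = 0.66  # "about 66%" as cited above

# Roughly one attempt in three fails.
print(f"single-task failure rate: {failure_rate(osworld_success):.2f}")

# Under the independence assumption, reliability drops quickly as tasks are chained.
for steps in (1, 2, 3):
    print(f"{steps} task(s) in a row: {chained_success(osworld_success, steps):.2f}")
```

Under these assumptions, an unsupervised workflow of three such tasks would complete cleanly less than a third of the time, which is one way to read "not yet reliable enough to trust by default."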

Why Agents Force a New Engineering Discipline

This is where the story gets more serious.

A model that answers a question is one thing. An agent that can choose tools, retrieve data, coordinate steps, and act inside a workflow is something else entirely. Once AI moves from generating outputs to taking actions, the real challenge is no longer just intelligence. It is control.

That is why Stanford’s broader warning matters so much. The report says AI is increasingly being tested on real-world task execution, even as governance frameworks and evaluation methods struggle to keep pace. It also reports that documented AI incidents rose to 362 in 2025, up from 233 in 2024.

In a chatbot world, a bad answer is the problem.

In an agentic world, a bad answer can become a bad decision, a bad escalation, a bad transaction, or a bad workflow action.

That is a different class of risk.

And it is why the next wave of enterprise AI will depend less on who has the smartest model and more on who can engineer trustworthy agentic systems with clear authority boundaries, runtime controls, observability, audit trails, and human override built in.

That is the emerging need: an engineering discipline for designing, governing, and operating AI agents that are not just capable but dependable in production.

AI Adoption Is Surging. Agent Deployment Is Not

Stanford’s data reveals a gap that most AI strategy decks still gloss over.

AI adoption is spreading at historic speed. Generative AI reached 53% population adoption within three years, faster than the personal computer or the internet. Organizational adoption rose to 88%.

But AI agents are a very different story.

Stanford reports that AI agent deployment remains in the single digits across nearly all business functions. In fact, across most functions, a majority of organizations reported no agent use at all, and scaled use remained rare almost everywhere. Even in the most active areas, such as IT and knowledge management, about two-thirds or more of respondents still reported no use. In the technology sector, the highest scaled usage reached only 24% in software engineering, 22% in IT, and 21% in service operations.

That gap is the real signal.

Enterprises have adopted AI.

They have not yet mastered agents.

That distinction matters because assistive AI is easier to deploy than delegated machine agency. Drafting, summarizing, and recommending are one thing. Letting systems reason across tools, execute multi-step workflows, and act with bounded authority inside real operations is something else entirely.

This is where the next competitive edge will be built.

Not in generic AI adoption.

In the ability to engineer trustworthy agentic systems that enterprises can actually scale.

Why the Agentic Era Gets Harder from Here

Stanford’s report also makes clear why the next phase of AI will be harder than many leaders expect.

The same frontier models powering the rise of AI agents are becoming more opaque, not less. Stanford reports that industry produced more than 90% of notable AI models in 2025, that several of the most resource-intensive systems no longer disclose parameter counts, training dataset sizes, or training duration, and that API access is now the most common release mode. It also notes that 80 of 95 notable models were released without training code.

That matters far more in an agentic world than in a chatbot world.

A closed model that generates text is one thing. A closed model embedded inside an agent that can retrieve data, choose tools, coordinate steps, and take action across a workflow is something else entirely. The problem is no longer just whether the model is smart. It is whether the system around the model can keep its behavior inside acceptable bounds.

Stanford points in exactly that direction. In its responsible AI framework, the report highlights transparency and auditability, system integrity and risk controls, human oversight, and accountability and enforcement as core requirements. It also notes that the average score on the Foundation Model Transparency Index fell from 58 in 2024 to 40 in 2025.

That is the real challenge now.

As agent capability rises, model transparency is falling. So the burden shifts upward to the engineering layer: permissions, runtime governance, observability, rollback, audit trails, and human override. That is why the next advantage will not come from model access alone. It will come from the ability to engineer trustworthy agentic systems on top of increasingly opaque foundations.
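As a rough illustration of what that engineering layer can look like, the sketch below wraps an agent's tool calls in a permission check, a human-approval hook, and an audit trail. Every name in it (`GuardedToolRunner`, `AuditRecord`, the tool names, the approval rule) is hypothetical and chosen for this example. It is not drawn from the Stanford report or from any specific framework, and it leaves out rollback and richer observability.

```python
from dataclasses import dataclass, field
from typing import Any, Callable, Dict, List, Optional, Set


@dataclass
class AuditRecord:
    """One entry in the audit trail (hypothetical schema)."""
    agent: str
    tool: str
    args: Dict[str, Any]
    outcome: str  # "executed", "denied", or "rejected_by_human"


@dataclass
class GuardedToolRunner:
    """Hypothetical control layer placed between an agent and its tools."""
    agent: str
    allowed_tools: Set[str]    # authority boundary: tools this agent may call
    needs_approval: Set[str]   # tools that require human sign-off first
    approve: Callable[[str, Dict[str, Any]], bool]  # human override hook
    audit_log: List[AuditRecord] = field(default_factory=list)

    def run(self, tool: str, fn: Callable[..., Any], **args: Any) -> Optional[Any]:
        """Run `fn` only if policy allows it, and record every attempt."""
        if tool not in self.allowed_tools:
            outcome, result = "denied", None
        elif tool in self.needs_approval and not self.approve(tool, args):
            outcome, result = "rejected_by_human", None
        else:
            outcome, result = "executed", fn(**args)
        # Denied and rejected attempts are logged too, so the trail is complete.
        self.audit_log.append(AuditRecord(self.agent, tool, args, outcome))
        return result


runner = GuardedToolRunner(
    agent="billing-agent",
    allowed_tools={"lookup_invoice", "issue_refund"},
    needs_approval={"issue_refund"},
    # Stand-in for a real human reviewer: approve only small refunds.
    approve=lambda tool, args: args["amount"] <= 100,
)

runner.run("lookup_invoice", lambda invoice_id: {"id": invoice_id}, invoice_id="A1")
runner.run("issue_refund", lambda amount: f"refunded {amount}", amount=500)
runner.run("delete_account", lambda: "deleted")

print([record.outcome for record in runner.audit_log])
```

The design choice worth noting is that the policy lives outside the model: the same checks apply whether the underlying model is open or closed, which is what makes this layer useful on top of opaque foundations.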

What This Shift Demands: Agentic Engineering

What Stanford’s report is really pointing to is not just better models or even better agents. It is the rise of a new engineering problem.

The report shows AI is being pushed toward real-world task execution, while governance frameworks and evaluation methods struggle to keep pace. It also shows agent systems making meaningful advances, from about 66% task success on OSWorld to multi-agent clinical systems delivering major gains on complex reasoning tasks. At the same time, Stanford’s responsible AI framework emphasizes transparency and auditability, system integrity and risk controls, human oversight, and accountability and enforcement as core requirements.

That combination changes the challenge.

Once AI systems can reason across steps, use tools, coordinate specialized roles, and act inside workflows, the goal is no longer just to make them intelligent. The goal is to make them dependable in production. That requires a discipline built around authority boundaries, runtime controls, observability, escalation, audit trails, and human override.

That discipline is Agentic Engineering.

Agentic Engineering is the emerging practice of designing, governing, and operating production-grade agentic systems. It sits beyond model development and beyond generic AI governance. It is about turning capable agents into systems that enterprises can actually trust.

That is also why the Agentic Engineering Institute, or AEI, exists. AEI is building this field into a professional discipline through standards, training, and field-tested practices for production-grade agentic systems.

If the last wave of AI was about proving that models can reason, the next wave will be about proving that agents can operate under control.

That is the shift Stanford’s report makes impossible to ignore.

The Real Divide in the Next AI Era

The Stanford AI Index 2026 does not say AI agents are ready to run the enterprise on their own.

It says something more important.

They are now powerful enough that no serious organization can afford to treat them as a side experiment. Stanford’s data points in the same direction throughout the report: agent performance is rising, AI is being pushed into real-world task execution, and the governance and evaluation systems around it are struggling to keep pace.

That is the real divide emerging now. Not between companies that use AI and companies that do not. That line is already disappearing. The real divide will be between organizations that merely adopt AI tools and those that can engineer trustworthy agentic systems that operate reliably, safely, and under control in production.

That is why this moment matters.

As agents become more capable, advantage shifts away from model access alone and toward production discipline: how well an organization can define authority, govern runtime behavior, enforce control, observe decisions, and keep machine action inside acceptable bounds.

That is exactly why Agentic Engineering matters, and why AEI exists.

For professionals, this is the moment to join AEI and build the discipline that the next era of AI will demand.

For enterprises, consultancies, and platform companies, this is the moment to partner with AEI to build production-grade agentic systems on a stronger foundation of standards, governance, and real-world practice.

The next AI winners will not simply have smarter models.

They will have better-engineered agents.

Join AEI if you want to build them.

Partner with AEI if you want to scale them.
