- May 1

Vanilla Agentic AI: Why AI Coding Rewrites the Build-vs-Buy Equation for Enterprise Agents

Over the past few months, I have heard the same question from CIOs, CTOs, and enterprise AI leaders:

Should we buy an agentic AI platform, or build our own?

The question sounds familiar. The pressure behind it is not.

Boards want an agentic AI strategy. Business units want agents in production. Vendors are arriving with polished demos and confident roadmaps. Engineering teams are discovering that AI coding can turn weeks of work into days. Risk teams are asking harder questions about governance, security, auditability, and control.

For decades, the answer would have been obvious: buy the platform.

Custom development was slow. Engineering talent was expensive. Integration was painful. Vendor platforms reduced cost, compressed timelines, and gave executives a safer path forward.

But agentic AI breaks that logic.

When AI makes coding cheaper, the hard problem is no longer creating the first agent. The harder problem is controlling what the agent can do, what context it can use, which tools it can invoke, how failures are contained, and how the enterprise proves what happened.

That changes the build-vs-buy equation.

Buy too early, and you may move fast while renting the wrong operating model. Build blindly, and you may recreate platform complexity without the discipline to govern it.

The smarter path is not anti-platform.

It is platform-independent.

That is the idea behind Vanilla Agentic AI:

Use platforms where they help. Own the control architecture where it matters.

1. The Old Equation Was Built for Expensive Code

The traditional build-vs-buy equation was never really about platforms. It was about economics.

Enterprises bought platforms because building software required expensive engineering labor, long delivery cycles, specialized infrastructure, integration work, security reviews, deployment pipelines, admin tooling, documentation, training, maintenance, and support. A platform bundled many of those costs into a product. Even when the platform was imperfect, the economic logic was clear: buying reduced the pain of creation.

That logic worked because code was scarce and expensive.

A vendor platform could win by offering prebuilt workflows, reusable components, standard connectors, permission models, dashboards, and lifecycle tooling. The more expensive custom development became, the more attractive the platform looked. In that world, platform abstraction was not just a technical convenience. It was an economic advantage.

But that advantage is now under pressure.

At Google Cloud Next 2026, Sundar Pichai said that 75% of all new code at Google is now AI-generated and approved by engineers, up from 50% last fall. He also said Google is moving toward “truly agentic workflows,” where engineers orchestrate autonomous digital task forces. One complex code migration, done by agents and engineers working together, was completed six times faster than what was possible a year earlier with engineers alone.

OpenAI is seeing the same structural shift. Business Insider reported that OpenAI President Greg Brockman said agentic coding tools moved from writing about 20% of developers’ code to 80% within a few months, while emphasizing that humans remain responsible for what gets merged.

This is the data point that changes the build-vs-buy discussion.

For decades, the strongest platform argument was speed: do not build it yourself because it will take too long. But if AI coding can generate scaffolding, adapters, tests, documentation, internal tools, workflow logic, and integration glue far faster than traditional development, then speed alone is no longer enough to justify platform dependency.

The question is no longer whether a vendor platform can help you build faster. Of course it can.

The question is whether the platform still gives you better economics once AI has reduced the cost of custom development.

That is where the old equation begins to break. It was designed for a world where building software was the expensive part. Agentic AI is creating a world where building may be cheap, but the architecture around autonomy becomes decisive.

2. When Code Becomes Abundant, Control Becomes Scarce

The first mistake is to treat AI coding as only a developer productivity story.

It is bigger than that.

AI coding changes where the bottleneck lives. Once agents can generate code, edit files, run tests, fix bugs, answer codebase questions, and propose pull requests, the scarce resource is no longer only engineering labor. The scarce resource becomes the system around the agent: the environment, the task structure, the validation loop, the review model, the orchestration layer, and the controls that determine whether generated work can be trusted.

OpenAI’s Codex work makes this shift visible. Codex operates as a software engineering agent that can work in isolated cloud environments, handle multiple tasks in parallel, write features, answer questions about a codebase, fix bugs, and propose pull requests for review.

But the deeper lesson comes from OpenAI’s own harness engineering experience. A small team started with an empty repository in August 2025. Five months later, the repository contained roughly one million lines of code across application logic, infrastructure, tooling, documentation, and internal developer utilities. About 1,500 pull requests had been opened and merged, with just three engineers driving Codex.

The headline is impressive. The hidden lesson is more important.

OpenAI said early progress was slower than expected not because Codex was incapable, but because the environment was underspecified. The agent lacked the tools, abstractions, and internal structure required to make progress toward high-level goals. The engineering team’s primary job became enabling agents to do useful work by designing systems, scaffolding, and feedback loops around them.

That is the real shift. The work moves from writing every line of code to engineering the conditions under which agents can produce useful, reviewable, testable, and trusted work.

OpenAI’s Symphony work shows the next bottleneck. As engineers ran more Codex sessions, context switching became painful. OpenAI wrote that most people could comfortably manage only three to five sessions before productivity dropped, so it built Symphony to turn a project-management board into a control plane for coding agents. The result was a reported 500% increase in landed pull requests on some teams.

This is the pattern enterprise leaders should pay attention to.

AI coding accelerates creation, but acceleration creates a new management problem.

More generated work means more review.

More parallel agents mean more orchestration.

More autonomy means more need for evidence, traceability, policy, rollback, and human judgment.

That is why the build-vs-buy equation changes. A platform may help create agents faster, but the durable advantage comes from engineering the control system around those agents.

The new bottleneck is not coding. It is control.

3. Platform Fatigue Is the Symptom, Not the Disease

Once code generation accelerates and control becomes the bottleneck, the next thing that happens is predictable: the market rushes to package the bottleneck.

That is what we are seeing now.

Every major enterprise AI conversation seems to come with a new agent platform, agent studio, AI workforce, workflow agent, orchestration layer, low-code builder, governance console, or “agentic transformation” offering. The demos are polished. The roadmaps are confident. The language is converging: faster agents, safer agents, governed agents, enterprise-ready agents.

Some of these platforms are valuable. Some will become important parts of the enterprise AI stack. The issue is not that platforms are useless. The issue is that the market is trying to sell certainty before the operating model has stabilized.

That is why platform fatigue is emerging.

It is not because enterprises are rejecting agentic AI. Gartner predicts that by 2028, 33% of enterprise software applications will include agentic AI, up from less than 1% in 2024. Agentic AI is clearly moving into the enterprise software mainstream.

But Gartner also predicts that over 40% of agentic AI projects will be canceled by the end of 2027 because of escalating costs, unclear business value, or inadequate risk controls. The same analysis warns that many initiatives are early-stage experiments or proofs of concept driven by hype, not durable production economics.

That contradiction explains the fatigue.

Enterprises do not lack agentic AI ambition. They lack confidence that today’s platform-centric answers can solve tomorrow’s production-grade problems.

This is also why “agent washing” matters. When every automation tool, workflow product, chatbot, and low-code builder is rebranded as agentic AI, buyers lose signal. The more every vendor claims to be the platform for enterprise agents, the harder it becomes for CIOs to separate useful acceleration from architectural dependency.

The fatigue is not emotional. It is economic.

CIOs are asking a different question now:

If AI coding makes custom development faster, and if production agents still require deep control over context, tools, governance, observability, and failure recovery, why should the proprietary platform be the default center of the architecture?

That is the real disease beneath the symptom.

Platform fatigue is not a rejection of agentic AI. It is a reaction to the gap between agent demos and production economics.

4. The Platform Trap: Faster Start, Higher Lifecycle Cost

Agentic AI platforms are attractive because they combine technical convenience with economic acceleration.

Need agents? There is a platform.

Need orchestration? Another platform.

Need governance? Another platform.

Need integration? Another platform.

Need scale? Another platform.

That is the promise and the problem. Each platform can reduce friction locally, but enterprises rarely end up with one clean agentic AI platform. They end up with overlapping agent builders, workflow tools, orchestration layers, connectors, governance dashboards, and monitoring systems.

The result is a TCO problem.

Agentic AI TCO = Build Cost + Integration Cost + Runtime Cost + Governance Cost + Change Cost + Evidence Cost + Failure Cost + Switching Cost + Talent Cost
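To make the equation concrete, here is a minimal Python sketch that sums the nine cost terms for two options. The class name `AgenticTCO`, the two scenarios, and every figure are hypothetical and chosen only for illustration; they are not drawn from any benchmark, vendor, or survey. A real comparison would use the enterprise's own estimates.

```python
from dataclasses import dataclass, fields


@dataclass(frozen=True)
class AgenticTCO:
    """The nine cost terms from the TCO equation, in one consistent unit."""
    build: float
    integration: float
    runtime: float
    governance: float
    change: float
    evidence: float
    failure: float
    switching: float
    talent: float

    def total(self) -> float:
        # Plain sum of the nine terms, mirroring the equation in the text.
        return sum(getattr(self, f.name) for f in fields(self))


# Hypothetical, illustrative scores only (arbitrary units, not real data).
platform_first = AgenticTCO(
    build=1, integration=3, runtime=3, governance=3, change=4,
    evidence=3, failure=3, switching=5, talent=2,
)
owned_control = AgenticTCO(
    build=3, integration=3, runtime=2, governance=2, change=2,
    evidence=2, failure=2, switching=1, talent=4,
)

for name, option in [("platform-first", platform_first),
                     ("owned control", owned_control)]:
    print(f"{name}: build={option.build}, lifecycle total={option.total()}")
```

In this made-up scenario the platform-first option has the lower Build Cost but the higher total. With different inputs the result could go the other way, which is why the comparison has to cover the whole lifecycle and not only the first term.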

Platforms often win on Build Cost. They help teams launch the first demo, configure the first workflow, connect the first system, and show the first executive update faster.

But five platform problems show up across the rest of the lifecycle.

1. Platform overpromise increases Failure Cost.

Many platforms imply that agentic AI is mostly a product-selection problem: choose the tool, configure the agent, connect the systems, and scale. But production-grade agents require runtime governance, failure recovery, evidence trails, observability, context control, and workflow-specific authority. When platforms overpromise, enterprises underestimate the production work and carry more failure risk.

2. AI platform washing increases Runtime and Governance Cost.

Some “agentic AI platforms” were not designed for agentic AI at all. They are legacy AI platforms, automation tools, RPA suites, low-code builders, or model-serving layers relabeled for the agentic wave. They may support workflows, prompts, models, or integrations, but lack the runtime architecture required for delegated action, memory, context control, tool-use governance, failure containment, and agentic observability. This is AI platform washing: selling an existing AI or automation platform as an agentic AI platform without the control architecture agentic systems require.

3. Platform fragmentation increases Integration Cost.

Each platform solves a local problem, but the enterprise inherits the cross-platform complexity. Salesforce’s 2026 Connectivity Benchmark makes this visible: 50% of AI agents operate in isolated silos, only 54% of organizations have a centralized governance framework, and 96% agree that agent success depends heavily on seamless, debt-free data integration. The broader Salesforce/MuleSoft discussion also reports that only 27% of enterprise applications are integrated, even as organizations are moving toward agentic transformation.

4. Misplaced control increases Governance and Evidence Cost.

This may be the most dangerous problem. In production-grade agentic AI, some controls should belong to the enterprise, not the platform: runtime authority, tool permissions, escalation rules, evidence standards, risk thresholds, audit logic, failure response, and autonomy boundaries. If those controls live mainly inside a vendor platform, the enterprise may be renting the very operating model it needs to own.

5. Lock-in disguised as acceleration increases Change and Switching Cost.

The first workflow may launch faster, but the enterprise may become dependent on vendor-specific orchestration, connectors, policy models, logs, APIs, skills, and release cycles. Over time, the real cost is not just subscription fees. It is the cost of changing workflows, switching models, integrating new systems, proving compliance, and exiting the platform.

That does not make platforms bad. It makes platform dependency risky.

The better question is not:

Which platform should we buy for agentic AI?

The better question is:

What architecture gives us the lowest risk-adjusted TCO across build, integration, runtime, governance, change, evidence, failure, switching, and talent?

That is the platform trap: faster start, but potentially higher lifecycle cost if the enterprise does not own the control architecture.

5. Vanilla Agentic AI: Own the Control Architecture

The answer to the platform trap is not to reject platforms. It is to stop making the platform the default center of the architecture.

Vanilla Agentic AI is a vendor-neutral architecture and development approach for building agentic systems directly on models, APIs, enterprise systems, open protocols, code, and engineered runtime controls, while keeping proprietary agent platforms optional, modular, and replaceable.

The principle is simple:

Buy acceleration. Own control.

Platforms may provide model access, workflow surfaces, connectors, deployment tooling, or governance features. But the enterprise should own the control architecture: runtime authority, tool-use policy, context governance, observability, evidence, escalation, failure response, and production-readiness criteria.

That distinction changes the starting question.

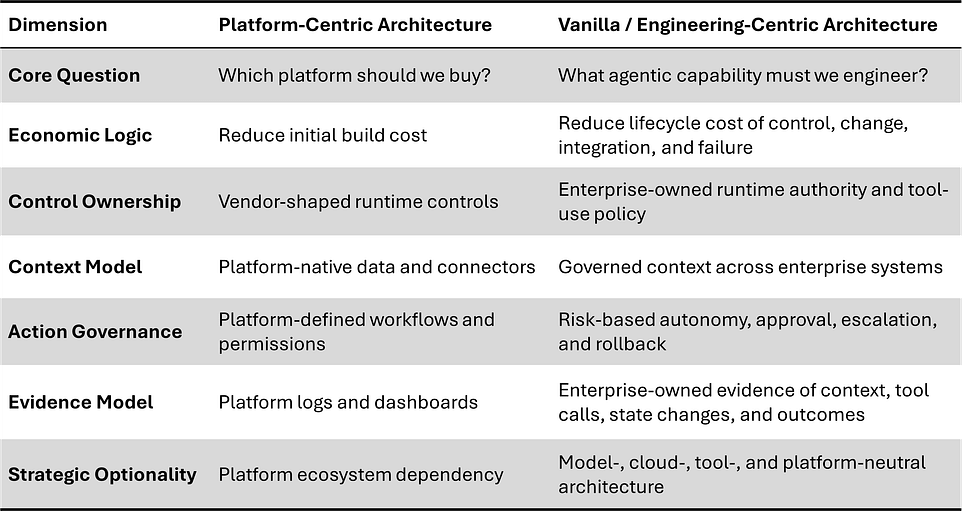

Table-1: The Agentic Architecture Ownership Matrix

This matters because enterprise agents are hard. Salesforce’s SCUBA benchmark found that agent performance on CRM workflows varied sharply by design: in zero-shot settings, some open-source-model-powered agents achieved less than 5% task success, while closed-source-model methods reached up to 39%; demonstration-augmented settings improved success to 50%. CRMArena found that state-of-the-art LLM agents succeeded in less than 40% of realistic CRM tasks with ReAct prompting and less than 55% even with function-calling abilities.

Those numbers are not arguments against platforms. They are arguments against shallow architecture.

In my experience, the durable advantage in enterprise AI rarely comes from owning every technical component. It comes from knowing which control points cannot be surrendered. A model can be swapped. A connector can be replaced. A workflow tool can change. But if the enterprise gives up control over runtime authority, context governance, tool-use policy, evidence, and failure response, it has outsourced the wrong layer.
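One way to picture an enterprise-owned control point is a thin gate that sits between any agent and any tool, regardless of which model or platform produced the request. The sketch below is a minimal illustration under stated assumptions: `ToolPolicy`, `ControlGate`, the tool names, and the three decision labels are all hypothetical and are not part of any vendor's API or of a published Vanilla Agentic AI specification.

```python
from dataclasses import dataclass, field
from typing import Any, Callable, Dict, List, Set


@dataclass
class ToolPolicy:
    """Enterprise-owned tool-use policy (hypothetical structure)."""
    allowed_tools: Set[str]
    approval_required: Set[str]  # tools that must escalate to a human


@dataclass
class ControlGate:
    """Checks every tool call against policy and records evidence."""
    policy: ToolPolicy
    tools: Dict[str, Callable[..., Any]]
    evidence: List[dict] = field(default_factory=list)

    def invoke(self, agent_id: str, tool: str, **kwargs: Any) -> dict:
        if tool not in self.policy.allowed_tools or tool not in self.tools:
            decision = "denied"
        elif tool in self.policy.approval_required:
            decision = "escalated"
        else:
            decision = "allowed"
        # The evidence trail is written for every decision, not only successes.
        self.evidence.append(
            {"agent": agent_id, "tool": tool, "args": kwargs, "decision": decision}
        )
        if decision != "allowed":
            return {"status": decision}
        return {"status": "allowed", "result": self.tools[tool](**kwargs)}


# Hypothetical tools; in practice these could wrap any connector or platform.
gate = ControlGate(
    policy=ToolPolicy(
        allowed_tools={"lookup_order", "issue_refund"},
        approval_required={"issue_refund"},
    ),
    tools={
        "lookup_order": lambda order_id: {"order_id": order_id, "state": "shipped"},
        "issue_refund": lambda order_id, amount: {"refunded": amount},
        "delete_customer": lambda customer_id: {"deleted": customer_id},
    },
)

r1 = gate.invoke("agent-1", "lookup_order", order_id="A17")
r2 = gate.invoke("agent-1", "issue_refund", order_id="A17", amount=40)
r3 = gate.invoke("agent-1", "delete_customer", customer_id="C9")
print(r1["status"], r2["status"], r3["status"], len(gate.evidence))
```

The tools behind the gate can be swapped without touching the policy or the evidence log, which is the separation the paragraph above describes: replaceable components, enterprise-owned control.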

That is the core of Vanilla Agentic AI:

Use platforms where they help. Own the control architecture where it matters.

6. The New Build-vs-Buy Decision Model

Once the enterprise separates platform acceleration from control ownership, the build-vs-buy question changes.

The old question was simple: Should we build or buy?

The new question is more precise:

What should we buy for speed, what should we build for control, what should we rent for leverage, and what must remain replaceable?

That is the new build-vs-buy model for enterprise agentic AI.

Platforms usually make sense when the workflow is standard, low-risk, and mostly contained inside one vendor ecosystem. They are especially useful when the platform already owns the data, workflow surface, user experience, and admin model. In those cases, buying the platform can reduce friction and accelerate validation.

Vanilla Agentic AI becomes more attractive when the workflow is strategic, differentiated, cross-system, regulated, high-change, or mission-critical. It also becomes more important when context control, auditability, failure cost, model neutrality, and internal capability matter more than speed to first demo.

The dividing line is not platform versus no platform.

The dividing line is workflow economics.

A platform is economically attractive when the workflow is generic. Vanilla becomes economically attractive when the workflow is strategic.

The new decision model is not “build everything” or “buy everything.” It is a layer-by-layer ownership decision.

This is the practical logic of Vanilla Agentic AI.

Buy platforms for acceleration.

Rent commodity capabilities for leverage.

Build control architecture for differentiation.

Keep models, tools, and platforms replaceable.

Own runtime authority, evidence standards, governance rules, and production-readiness criteria.
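The layer-by-layer logic can be expressed as a simple review of an architecture plan. The following Python sketch is only an illustration: the layer names, the `review_plan` helper, and the example plan are hypothetical, and the set of enterprise-owned layers is taken from the list above and is not an official standard.

```python
from typing import Dict, List

# Control points the text says the enterprise should own.
ENTERPRISE_OWNED = {
    "runtime_authority",
    "evidence_standards",
    "governance_rules",
    "production_readiness_criteria",
}

VALID_DECISIONS = {"buy", "rent", "build", "own"}


def review_plan(plan: Dict[str, str]) -> List[str]:
    """Return findings for a plan that maps each layer to a decision."""
    findings = []
    for layer, decision in sorted(plan.items()):
        if decision not in VALID_DECISIONS:
            findings.append(f"{layer}: unknown decision '{decision}'")
    for layer in sorted(ENTERPRISE_OWNED):
        decision = plan.get(layer)
        if decision is None:
            findings.append(f"{layer}: no decision recorded")
        elif decision != "own":
            findings.append(f"{layer}: marked '{decision}', expected 'own'")
    return findings


# Hypothetical plan for one workflow.
plan = {
    "workflow_surface": "buy",
    "model_access": "rent",
    "control_architecture": "build",
    "runtime_authority": "own",
    "evidence_standards": "buy",  # a control point handed to a vendor
    "governance_rules": "own",
}

for finding in review_plan(plan):
    print(finding)
```

Here the review flags two items: evidence standards assigned to a vendor, and production-readiness criteria that nobody has claimed. Everything else in the plan is free to be bought, rented, or built.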

The mistake is not buying platforms.

The mistake is confusing platform dependency with enterprise capability.

7. How AEI Turns Vanilla Agentic AI Into Enterprise Capability

The new build-vs-buy model creates a harder leadership question:

Who inside the organization is qualified to make these decisions?

It is one thing to say, “Buy where the workflow is generic and own where control matters.” It is another thing to assess a real workflow, evaluate platform claims, identify control gaps, define runtime authority, design evidence requirements, and decide whether an agent is ready for production.

That requires a discipline.

That discipline is Agentic Engineering:

an AI-native engineering discipline for enterprise AI, focused on the systematic design, operation, and governance of agentic systems, where cognition, runtime governance, and trust are engineered as first-class system properties.

This is the reason AEI exists. The Agentic Engineering Institute is the professional body advancing this discipline through standards, training, certification, and enterprise partnership programs. Its mission is to help professionals and organizations move beyond agentic AI experimentation and build production-grade, enterprise-grade, and regulatory-grade agentic AI capability.

In that context, Vanilla Agentic AI is not just an architecture choice. It is a capability challenge.

Agentic Engineering gives teams the methods, language, practices, and standards needed to evaluate agentic systems beyond vendor demos and pilot success. The goal is not to build everything internally. The goal is to know what must be engineered, what can be safely bought, and what should remain replaceable.

For enterprises, this changes the conversation from platform selection to production readiness. Teams can assess whether an agent has the right runtime controls, context boundaries, tool-use policies, escalation paths, testing approach, evidence model, and governance structure before it is trusted inside real workflows.

For consulting firms, systems integrators, and delivery organizations, Agentic Engineering creates a repeatable delivery discipline. Instead of selling isolated pilots or platform implementations, partners can help clients build durable agentic AI capability: architecture, controls, operating model, QA, governance, and production-readiness assessment.

For platform companies, Agentic Engineering provides a clearer standard for enterprise alignment. It helps distinguish real agentic runtime capability from agent washing and gives platform teams a way to position their products as components within production-grade enterprise architectures rather than as one-size-fits-all operating models.

Vanilla Agentic AI gives enterprises architectural independence.

Agentic Engineering gives them the discipline to use it.

AEI helps turn that discipline into capability at scale.

8. The Future Is Engineering-Centric, Not Platform-Centric

The agentic AI platform race will continue.

Some platforms will become powerful. Some will become infrastructure. Some will be absorbed into larger enterprise suites. Some will disappear. That is normal in every major technology cycle.

But the winners in enterprise agentic AI will not be the organizations that simply choose the most impressive platform.

They will be the organizations that understand the new build-vs-buy equation.

They will know what to buy for acceleration, what to rent for leverage, what to build for differentiation, and what to own for control.

That is the real shift.

The future is not platform-less. Enterprises will still use platforms, models, cloud services, APIs, workflow tools, and vendor products. But the future should not be platform-centric by default.

The issue is not whether platforms are used. The issue is who controls the agentic architecture.

In a platform-centric approach, the vendor defines too much of the runtime behavior, governance model, evidence structure, integration path, and change boundaries. In an engineering-centric approach, the enterprise owns those control points and uses platforms as components where they create leverage.

That is why Vanilla Agentic AI matters. It does not reject platforms. It rejects platform dependency as the default architecture for production-grade enterprise agents.

The build-vs-buy equation has changed.

When code was expensive, platforms won by reducing build cost. As AI makes coding cheaper, the real advantage shifts to the organizations that can engineer trustworthy autonomy: systems that can reason, act, observe, escalate, recover, and prove what happened.

That is the discipline of Agentic Engineering.

This is the moment to move beyond demos, pilots, and platform comparisons.

If you are an engineer, architect, product leader, governance leader, consultant, or executive building the next generation of enterprise AI systems, join AEI and build Agentic Engineering capability.

If you are an enterprise, consulting firm, systems integrator, delivery organization, or platform company serious about production-grade agentic AI, partner with AEI.

The platform race will continue.

But the enterprise winners will be the ones that engineer the system.
